The Hidden Cost of “Serverless by Default” on AWS

Why this matters this week

A pattern keeps popping up in technical post-mortems and Slack threads:

“We went serverless on AWS for simplicity and cost savings. Now our bill is weird, our latency is spiky, and nobody understands the platform.”

Serverless on AWS (Lambda, API Gateway, DynamoDB, Step Functions, EventBridge, SNS/SQS, etc.) is no longer the “new” thing. Most engineering orgs now sit in one of two buckets:

- They’re deep in serverless, fighting cost, cold starts, and debugging across 8 managed services.

- They’re wary because of exactly those stories and stick to ECS/EKS “just to be safe.”

Both positions often miss the underlying issue: serverless is a platform decision, not a single service choice, and most teams are not doing platform engineering intentionally.

This week’s post is about the trade-offs of “serverless by default” on AWS, and a practical way to turn your current mess (or future plans) into something reliable, observable, and cost-aware.

What’s actually changed (not the press release)

Three real shifts in the AWS ecosystem make this topic different from 2–3 years ago:

- Serverless sprawl is now the norm

Common reality in 2026 AWS accounts:

- 200–1000+ Lambda functions

- Dozens of SQS queues, SNS topics, EventBridge rules

- Fragmented CloudWatch Logs, some X-Ray, maybe a third-party APM

- Mixed IaC: CloudFormation + CDK + a bit of Terraform + manual console edits

This complexity used to be a “scale-up” problem. Now it appears in mid-sized products with 3–6 teams.

- Pricing pressure is real

Engineer-hours are expensive, but so are:

- Lambda duration pricing, billed per GB-second (especially for Java/.NET with high memory settings)

- API Gateway REST APIs (about $3.50 per million requests, versus cheaper options such as ALB -> Lambda or direct Lambda function URLs)

- DynamoDB on-demand vs provisioned vs autoscaling misconfigurations

CFOs and CTOs now look at the combined AWS bill and ask, “Why is this 2–3x higher than the EC2/ECS alternative?”

- AWS added more middle-ground options

A few key examples:

- Lambda function URLs, ALB -> Lambda, HTTP APIs: cheaper/leaner than REST APIs in API Gateway.

- Aurora Serverless v2 and RDS Proxy: smoother scale than old Aurora Serverless v1 and fewer connection-hell issues with Lambda.

- Graviton across Lambda, Fargate, EC2: real cost/perf benefits if you tune memory/CPU well.

These don’t magically fix architecture, but they mean simple “serverless is always cheaper/easier” beliefs are now demonstrably wrong in many cases.

How it works (simple mental model)

Use this mental model when designing on AWS:

Every managed service trades:

– Control for convenience

– Predictable capacity cost for request-based cost

– Debuggability for integration complexity

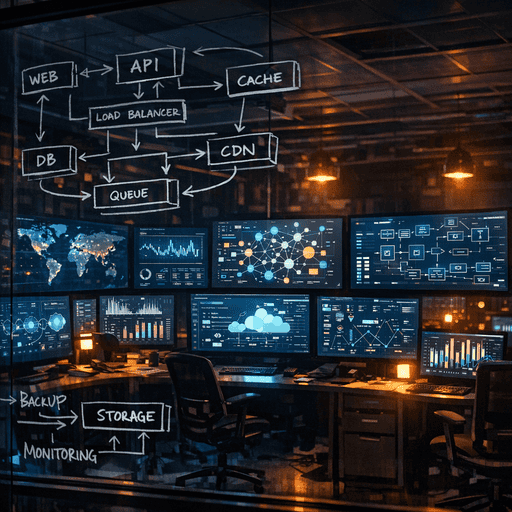

In serverless-heavy AWS setups, you are building a distributed system out of 10–15 primitives:

- Compute: Lambda, Fargate, ECS

- API edge: API Gateway (REST/HTTP/WebSocket), ALB, function URLs

- Async backbone: SQS, SNS, EventBridge, Kinesis

- State: DynamoDB, S3, Aurora, RDS

- Glue/coordination: Step Functions, EventBridge, Lambda triggers

- Observability: CloudWatch, X-Ray, OpenTelemetry collectors, third-party APM

To keep sanity, think in terms of platform layers:

- Execution layer

Where code runs. Decide consciously:

- Latency-sensitive, bursty, unpredictable → Lambda.

- Long-running, steady, streaming, CPU-heavy → Fargate/ECS or EC2.

- Stateful services (caches, in-memory state) → almost always ECS/EC2.

- Interaction layer

How requests hit your system:

- Public APIs where you need auth, throttling, and multi-tenant routing → API Gateway HTTP or REST (choose HTTP by default).

- High-volume, cheap, simpler routing → ALB or Lambda URLs with WAF in front.

- Coordination layer

How services talk:

- Fire-and-forget, high throughput, order not critical → SQS + DLQ.

- Pub/sub fan-out inside your domain → SNS → SQS pattern or EventBridge if you need schema + cross-account.

- Multi-step business workflows with human-time durations → Step Functions.

- Observability & governance

This is the platform glue:

- Standard logging contract (correlation IDs, structured logs).

- Shared tracer library (OpenTelemetry, AWS Distro) in every Lambda/container.

- Unified dashboards and SLOs for latency, error rate, cold starts, concurrency, and cost.

If you don’t define and enforce these layers, you end up with teams making different per-service decisions, and platform complexity explodes.
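To make the execution-layer rules concrete, here is a minimal sketch that encodes them as a function. The `Workload` fields and the `choose_execution_layer` name are hypothetical and exist only for illustration; the rules are the ones listed above, and a real decision would weigh more factors than four booleans.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Hypothetical workload description; field names are illustrative."""
    latency_sensitive: bool = False
    bursty_or_unpredictable: bool = False
    long_running_or_cpu_heavy: bool = False  # steady, streaming, or CPU-heavy work
    stateful: bool = False                   # caches, in-memory state


def choose_execution_layer(w: Workload) -> str:
    """Apply the execution-layer rules from the list above, most restrictive first."""
    if w.stateful:
        # Stateful services almost always belong on ECS/EC2.
        return "ECS/EC2"
    if w.long_running_or_cpu_heavy:
        return "Fargate/ECS or EC2"
    if w.latency_sensitive or w.bursty_or_unpredictable:
        return "Lambda"
    # Nothing matched: make the decision explicitly instead of defaulting.
    return "undecided"


if __name__ == "__main__":
    print(choose_execution_layer(Workload(bursty_or_unpredictable=True)))
    print(choose_execution_layer(Workload(stateful=True, latency_sensitive=True)))
```

The ordering is a design choice: statefulness wins over burstiness, because a bursty but stateful service still needs somewhere to keep its state.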

Where teams get burned (failure modes + anti-patterns)

1. Overusing Lambda as a universal hammer

Symptoms:

– Lambdas calling other Lambdas synchronously via API Gateway or function URLs.

– 10–15 hops for a simple user request.

– Difficult debugging and high p95 latency.

Impact:

– You pay for multiple cold starts + externalized network hops.

– Observability is fragmented, and retries across multiple hops multiply failures.

Better:

– Introduce coarser-grained services for certain domains on ECS/Fargate.

– Ring-fence Lambda for edge compute, glue code, light request handling.
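The cost of long synchronous chains can be put into rough numbers. The sketch below is a back-of-the-envelope model, not a measurement: it assumes hops fail independently and that every caller retries its downstream call the same number of times. Both function names are made up for this example.

```python
def end_to_end_success(per_hop_success: float, hops: int) -> float:
    """Chance a request succeeds when every hop must succeed (independent hops assumed)."""
    return per_hop_success ** hops


def worst_case_calls_at_last_hop(attempts_per_hop: int, hops: int) -> int:
    """Worst-case calls reaching the deepest service when each caller retries independently."""
    return attempts_per_hop ** hops


if __name__ == "__main__":
    # Ten hops at 99.9% each is noticeably worse than any single hop.
    print(round(end_to_end_success(0.999, 10), 4))
    # Three attempts at each of four hops can fan out to 81 calls at the bottom.
    print(worst_case_calls_at_last_hop(3, 4))
```

This is the arithmetic behind "retries multiply failures": fewer, coarser hops shrink both exponents.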

2. “Invisible” concurrency and throttling

Example pattern:

– Frontend calls API Gateway.

– API Gateway -> Lambda -> DynamoDB.

– Sudden traffic spike or batch job → Lambda concurrency ramps to thousands.

Common failure:

– DynamoDB or an RDS instance gets hammered.

– Downstream throttling triggers Lambda retries and DLQ pollution.

– From the user perspective: intermittent 5xx and timeouts.

Mitigations:

– Use reserved concurrency on critical Lambdas.

– Place SQS between external triggers and heavy downstream work to smooth spikes.

– Explicitly test failure by throttling downstream (e.g., set very low DynamoDB RCUs/WCUs on a table in a staging account and load test).
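A rough way to size reserved concurrency is Little's law (concurrency ≈ arrival rate × average duration). The sketch below uses that approximation, which is my framing rather than anything AWS-specific, and the function names are hypothetical. It ignores cold starts and retries, so treat the results as a starting point for load tests.

```python
import math


def estimated_concurrency(requests_per_second: float, avg_duration_ms: float) -> int:
    """Little's law estimate of concurrent Lambda executions at steady state."""
    return math.ceil(requests_per_second * avg_duration_ms / 1000)


def max_downstream_calls_per_second(
    reserved_concurrency: int,
    avg_duration_ms: float,
    calls_per_invocation: int = 1,
) -> float:
    """Upper bound on downstream calls/sec once concurrency is capped."""
    invocations_per_second = reserved_concurrency * (1000 / avg_duration_ms)
    return invocations_per_second * calls_per_invocation


if __name__ == "__main__":
    # A spike of 5000 req/s at 200 ms each needs about 1000 concurrent executions.
    print(estimated_concurrency(5000, 200))
    # Capping at 100 concurrent executions limits the database to about 500 calls/sec.
    print(max_downstream_calls_per_second(100, 200))
```

Working backwards from what the database can absorb gives a defensible reserved-concurrency number instead of a guess.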

3. API Gateway bill shock

Real-world example:

– ~30M API calls/month via REST API Gateway.

– Lambda duration low; main cost line item is API Gateway itself.

– Switching the majority of endpoints to HTTP API or ALB → 60–70% cost reduction with minimal code change.

Anti-pattern:

– Using REST APIs for internal, high-volume, simple JSON passthrough between your own services.

Better:

– Default to HTTP API for standard JSON REST where features suffice.

– Use ALB or NLB for internal service-to-service traffic when possible.

– Keep REST APIs for where you truly need advanced features (complex authorizers, request transformations, legacy constraints).
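The request-charge arithmetic behind that example is easy to sketch. The $3.50 per million figure comes from earlier in this post; the $1.00 per million HTTP API price is an assumption (first-tier list price in a typical US region), so check the current pricing page for your region. Data transfer, caching, and Lambda costs are deliberately left out.

```python
REST_API_PER_MILLION = 3.50  # figure quoted earlier in this post
HTTP_API_PER_MILLION = 1.00  # assumption: verify against current AWS pricing


def request_cost(requests: float, price_per_million: float) -> float:
    """Monthly request charge in dollars, ignoring tiering and free tier."""
    return requests / 1_000_000 * price_per_million


def savings_fraction(total_requests: int, share_moved_to_http: float) -> float:
    """Fraction of the REST-only bill saved by moving a share of traffic to HTTP APIs."""
    before = request_cost(total_requests, REST_API_PER_MILLION)
    moved = total_requests * share_moved_to_http
    after = (
        request_cost(moved, HTTP_API_PER_MILLION)
        + request_cost(total_requests - moved, REST_API_PER_MILLION)
    )
    return 1 - after / before


if __name__ == "__main__":
    # 30M calls/month with 90% of traffic moved: roughly a 64% reduction.
    print(f"{savings_fraction(30_000_000, 0.9):.0%}")
```

Under these assumed prices, moving most but not all traffic lands inside the 60–70% range quoted above.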

4. Observability as an afterthought

Smell:

– Everyone tails CloudWatch Logs by hand.

– No service map, no trace-based debugging.

– Latency and error rates discovered via user complaints, not dashboards.

Impact:

– Incident MTTR is dominated by “where is this failing?” rather than actual fix time.

– People start to fear changing or refactoring anything.

Minimum bar:

– Correlation ID passed from edge (API Gateway/ALB) through every hop.

– Tracing library baked into a shared Lambda/container layer.

– A platform team–owned “golden dashboard” per domain: p50/p95 latency, error rate, cold starts, concurrency, DLQ depth.
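As a sketch of the first item in that minimum bar, here is a Lambda-style handler that reuses an incoming correlation ID or mints one, then emits structured JSON logs. The `x-correlation-id` header name and the helper names are assumptions, not a standard. `rawPath` is the path field in the HTTP API (payload v2) event; REST API events use `path` instead.

```python
import json
import logging
import uuid

# In Lambda the runtime attaches a handler to the root logger;
# elsewhere, configure logging yourself (e.g. logging.basicConfig).
logger = logging.getLogger("app")
logger.setLevel(logging.INFO)

CORRELATION_HEADER = "x-correlation-id"  # hypothetical header name


def get_correlation_id(event: dict) -> str:
    """Reuse the caller's correlation ID if present, otherwise create one."""
    headers = event.get("headers") or {}
    for name, value in headers.items():
        # Header casing varies by integration, so compare case-insensitively.
        if name.lower() == CORRELATION_HEADER:
            return value
    return str(uuid.uuid4())


def log_event(correlation_id: str, message: str, **fields) -> str:
    """Emit one structured log line and return it for inspection."""
    line = json.dumps({"correlation_id": correlation_id, "message": message, **fields})
    logger.info(line)
    return line


def handler(event: dict, context) -> dict:
    correlation_id = get_correlation_id(event)
    log_event(correlation_id, "request received", path=event.get("rawPath"))
    # Echo the ID so the next hop and the client can log the same value.
    return {
        "statusCode": 200,
        "headers": {CORRELATION_HEADER: correlation_id},
        "body": json.dumps({"ok": True}),
    }
```

Putting these helpers into the shared layer is what makes the contract enforceable: teams import it rather than reimplementing it per function.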

5. Ad-hoc infrastructure without a platform boundary

Pattern:

– One team uses CDK in TypeScript, another uses Terraform, some resources are console-created.

– No clear ownership of shared pieces like VPCs, IAM roles, EventBridge buses.

– IAM policies are copy-pasted “AdministratorAccess” because “it’s blocking us.”

Consequences:

– Security is hard to reason about.

– Refactors and cross-team changes become political problems.

– Compliance and audit are painful.

Fix:

– Have a platform engineering boundary:

– Platform team owns baseline networking, IAM guardrails, central logging, CI/CD templates, shared event buses.

– Product teams consume these via templates/modules, not build ad-hoc from scratch.

Practical playbook (what to do in the next 7 days)

You won’t rebuild your platform in a week, but you can set direction and fix high-ROI problems.

Day 1–2: Baseline your reality

- Inventory your serverless footprint

- Count: Lambdas, API Gateways, Step Functions, SQS queues, EventBridge rules, DynamoDB tables.

- For each domain/product, answer: “How many hops does a typical user request take?”

- Top 10 cost & risk hotspots

- From your AWS bill, pull:

- Top 10 Lambda functions by cost.

- Top 10 API Gateway endpoints by request volume.

- Top 5 DynamoDB tables by spend.

- For each: note if they are latency-sensitive, batch, or internal-only.

- Observability gap check

- Pick a single, representative user request.

- Try to follow it through logs/traces:

- Can you see end-to-end?

- Can you link all hops via a single trace/correlation ID?

- Time box this to 60 minutes; note where you get stuck.
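For the "top 10 Lambda functions by cost" step, a rough ranking can be computed from invocation counts, average duration, and memory size if the bill isn't broken down per function. This is a sketch under stated assumptions: the two price constants are x86 list prices for a typical US region, the free tier is ignored, and `FunctionUsage` is a hypothetical record you would fill from your own metrics export.

```python
from dataclasses import dataclass

PRICE_PER_GB_SECOND = 0.0000166667  # assumption: x86 list price, verify for your region
PRICE_PER_MILLION_REQUESTS = 0.20   # assumption: verify for your region


@dataclass
class FunctionUsage:
    """Hypothetical monthly usage record for one Lambda function."""
    name: str
    invocations: int
    avg_duration_ms: float
    memory_mb: int


def monthly_cost(u: FunctionUsage) -> float:
    """Approximate monthly cost: duration charge plus request charge."""
    gb_seconds = u.invocations * (u.avg_duration_ms / 1000) * (u.memory_mb / 1024)
    requests = u.invocations / 1_000_000 * PRICE_PER_MILLION_REQUESTS
    return gb_seconds * PRICE_PER_GB_SECOND + requests


def top_by_cost(usages: list[FunctionUsage], n: int = 10) -> list[FunctionUsage]:
    """Return the n most expensive functions, most expensive first."""
    return sorted(usages, key=monthly_cost, reverse=True)[:n]


if __name__ == "__main__":
    sample = [
        FunctionUsage("thumbnailer", 2_000_000, 900, 2048),
        FunctionUsage("auth-check", 50_000_000, 15, 256),
        FunctionUsage("report-export", 20_000, 45_000, 3008),
    ]
    for u in top_by_cost(sample, n=3):
        print(f"{u.name}: ${monthly_cost(u):.2f}")
```

The interesting output is usually the mismatch: the function with the most invocations is often not the one costing the most.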

Day 3–4: Targeted improvements

- Quick cost win: API edge

- Identify any REST APIs used for:

- Internal-only calls.

- Simple JSON pass-through.

- Plan migration of 1–2 high-volume endpoints to HTTP APIs or ALB in the next sprint.

- Concurrency and backpressure

- For the top 5 Lambdas by invocation:

- Inspect max concurrency, throttles, and errors.

- If any hits downstream databases directly:

- Add or confirm reserved concurrency.

- Consider putting SQS or Kinesis in front of heavy, non-user-facing work.

- Cold start & latency hotspots

- For your top 5 latency-sensitive Lambdas:

- Check language/runtime and memory settings.

- For Java/.NET, bump memory to reduce execution time; measure cost/latency trade-off.

- Enable provisioned concurrency only where justified by p95 latency and traffic.
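When measuring the memory trade-off, a break-even number helps. Lambda's duration charge scales with memory × time, so a higher memory setting costs the same only if duration drops in proportion. The helper names and the sample measurements below are hypothetical; the per-request charge does not depend on memory and is left out.

```python
def gb_seconds(memory_mb: int, duration_ms: float) -> float:
    """Billable GB-seconds for a single invocation."""
    return (memory_mb / 1024) * (duration_ms / 1000)


def break_even_duration_ms(old_memory_mb: int, old_duration_ms: float, new_memory_mb: int) -> float:
    """Duration at the new memory size that costs exactly the same as before."""
    return old_duration_ms * old_memory_mb / new_memory_mb


def is_cheaper(old_memory_mb: int, old_duration_ms: float,
               new_memory_mb: int, new_duration_ms: float) -> bool:
    """True if the new setting uses fewer GB-seconds per invocation."""
    return gb_seconds(new_memory_mb, new_duration_ms) < gb_seconds(old_memory_mb, old_duration_ms)


if __name__ == "__main__":
    # Doubling 1024 MB to 2048 MB is cost-neutral if 800 ms drops to 400 ms.
    print(break_even_duration_ms(1024, 800, 2048))
    # Hypothetical measurement: 350 ms at 2048 MB is both faster and cheaper.
    print(is_cheaper(1024, 800, 2048, 350))
```

If the measured duration lands above break-even, the extra memory buys latency at a price; whether that is worth it depends on the p95 target for that endpoint.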