Your ML System Isn’t Failing Randomly — You’re Just Not Measuring the Right Things

Why this matters right now

Most teams are quietly discovering the same thing: getting a model to 90%+ offline accuracy was the easy part. Keeping it useful, safe, and cost-effective in production over months is the hard part.

Three forces are colliding:

- Models are touching real decisions: Credit limits, fraud flags, medical triage, warehouse routing. Weak monitoring here isn’t a “model quality” issue; it’s a business risk and, increasingly, a regulatory risk.

- Data distributions are no longer stable: User behavior, fraud patterns, supply chains, and even web traffic change faster than your quarterly retraining plan.

- Compute is expensive: GPU-heavy deep models and “just a few more features” in feature pipelines both add up materially on the cloud bill.

The industry conversation tends to get stuck on model architecture. But in production, evaluation, monitoring, drift handling, and feature pipelines drive most of the reliability, cost, and societal impact.

The hard truth: if you can’t answer “Is this model still working, for whom, and at what cost?” with numbers, you don’t have a production ML system. You have a liability.

What’s actually changed (not the press release)

Three practical changes in the last ~3 years have reshaped applied ML in production:

1. Everything is now a feedback loop

Models used to be “batch scored once a day, reviewed quarterly.” Today:

- Online experimentation (A/B, bandits) feeds into retraining.

- Human review queues are labeled data factories.

- Real-time logs are treated as model telemetry.

This means monitoring and evaluation are part of the product, not an afterthought. If you’re not closing the loop, your competitors are.

2. Observability expectations caught up to ML

Infra teams have had:

- Metrics (Prometheus, etc.)

- Traces

- SLOs and error budgets

ML has historically had:

- A spreadsheet with AUROC

- Maybe a notebook with calibration plots

That gap is shrinking. Better logging, data catalogs, feature stores, and model registries exist not because vendors want dashboards, but because:

- Regulators want audit trails.

- Security teams want to know what data flows where.

- Finance wants a line item for “inference cost per decision.”

3. The cost/performance frontier actually moved

- Cloud GPU/accelerator pricing, spot instance strategies, better compilers, and model compression (e.g., graph/tensor optimizers, quantization, distillation) mean the same quality can be much cheaper if you invest in engineering.

- Conversely, it’s trivial to deploy a “state-of-the-art” model that multiplies your cost by 10x for a 1–2% gain on a benchmark that doesn’t reflect your real users.

The net: cost and performance are now co-designed, not separate concerns.

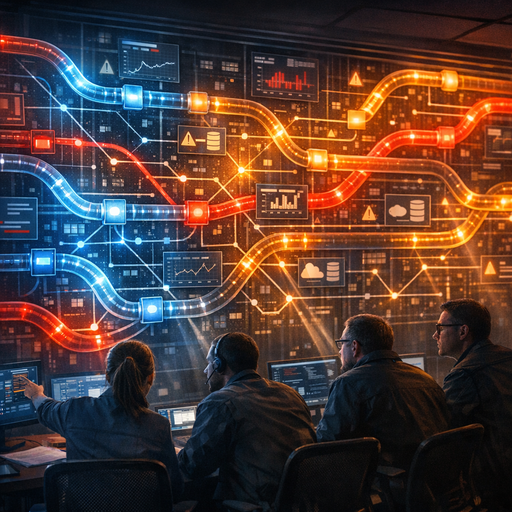

How it works (simple mental model)

Forget the vendor diagrams. For a technical decision-maker, a production ML system can be reasoned about as four loops:

1. Prediction loop (hot path)

- Input arrives → feature computation → model inference → post-processing → action/decision.

- Constraints: latency, availability, unit cost, fairness constraints, safety rules.

2. Evaluation loop (quality path)

- Collect: (features, prediction, action, outcome, context, timestamp, user segment).

- Compare: predictions vs. outcomes for different cohorts and objectives.

- Report: metrics, regressions, drift, and incidents to humans.


3. Monitoring loop (health path)

- Online signals without waiting for labels:

- Data distribution shifts

- Feature/label volume anomalies

- Latency and error rates

- Thresholds → alerts → automated mitigations (e.g., rollback model, disable feature, switch to baseline).


4. Learning loop (update path)

- Ingest labeled data (ground truth from user behavior, operators, or systems).

- Retrain / fine-tune / reweight.

- Validate, compare against current model, canary deploy, then roll out.

You can scale complexity (feature stores, streaming, ensemble models), but the core questions are:

- How fast does each loop run?

- How trustworthy is each loop?

- Who owns each loop?

Most reliability and drift disasters are misalignments between these loops.
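The monitoring loop's “thresholds → alerts → automated mitigations” step can be sketched as a small rule table. This is a minimal illustration, assuming hypothetical signal names (`feature_missing_rate`, `score_psi`, and so on) and placeholder thresholds; it is not the API of any particular monitoring tool.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List, Optional, Tuple


class Mitigation(Enum):
    # Hypothetical automated actions, mirroring the ones named above.
    NONE = "none"
    DISABLE_FEATURE = "disable_feature"
    SWITCH_TO_BASELINE = "switch_to_baseline"
    ROLLBACK_MODEL = "rollback_model"


# If several rules fire at once, the most severe action wins.
SEVERITY = [Mitigation.NONE, Mitigation.DISABLE_FEATURE,
            Mitigation.SWITCH_TO_BASELINE, Mitigation.ROLLBACK_MODEL]


@dataclass(frozen=True)
class HealthRule:
    signal: str          # name of a label-free health signal
    threshold: float     # breach when the observed value exceeds this
    action: Mitigation   # mitigation to trigger on breach


# Illustrative signals and thresholds only; derive real ones from your baselines.
RULES = [
    HealthRule("feature_missing_rate", 0.05, Mitigation.DISABLE_FEATURE),
    HealthRule("score_psi", 0.25, Mitigation.SWITCH_TO_BASELINE),
    HealthRule("p99_latency_ms", 250.0, Mitigation.SWITCH_TO_BASELINE),
    HealthRule("error_rate", 0.02, Mitigation.ROLLBACK_MODEL),
]


def breached(rule: HealthRule, signals: Dict[str, float]) -> bool:
    value: Optional[float] = signals.get(rule.signal)
    # Missing telemetry counts as a breach: silence is not the same as health.
    return value is None or value > rule.threshold


def evaluate_health(signals: Dict[str, float]) -> Tuple[List[str], Mitigation]:
    """Return the breached signal names and the single mitigation to apply."""
    fired = [r for r in RULES if breached(r, signals)]
    if not fired:
        return [], Mitigation.NONE
    worst = max(fired, key=lambda r: SEVERITY.index(r.action))
    return [r.signal for r in fired], worst.action
```

Keeping the rules as data rather than scattered `if` statements makes it easy to review who owns each threshold, which is one of the three questions above.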

Where teams get burned (failure modes + anti-patterns)

1. “We monitor the model” (but only the wrong things)

Common anti-patterns:

- Tracking only aggregate accuracy or AUROC offline.

- No metrics by:

- Segment (new vs. existing users, regions, device types)

- Time-of-day / day-of-week

- Data source

- Treating the metric as a single number, not a distribution with confidence intervals.

Result: You miss that:

- The model degrades heavily on a minority region.

- Performance degrades under peak load when an upstream feature source is flaky.

- Drift in a specific feature undermines reliability before overall accuracy drops.

Fix:

- Define metrics per cohort, not just global averages.

- Track base rates, calibration, decision thresholds, and outcome-based business metrics (e.g., fraud dollars prevented vs. false positives per segment).
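Here is a minimal sketch of a per-cohort report that treats each metric as an estimate with an interval rather than a single number. It assumes a hypothetical `Record` with a segment label, a predicted probability, and a 0/1 outcome; the percentile bootstrap is one common way to get the interval, not the only one.

```python
import random
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Sequence, Tuple


@dataclass(frozen=True)
class Record:
    segment: str    # cohort label, e.g. region or "new" vs. "existing"
    score: float    # predicted probability of the positive class
    outcome: int    # observed label (0 or 1) once it arrives


def bootstrap_ci(values: Sequence[int], n_boot: int = 1000,
                 alpha: float = 0.05, seed: int = 0) -> Tuple[float, float]:
    """Percentile bootstrap confidence interval for the mean of `values`."""
    rng = random.Random(seed)
    n = len(values)
    means = sorted(sum(rng.choices(values, k=n)) / n for _ in range(n_boot))
    return means[int(alpha / 2 * n_boot)], means[int((1 - alpha / 2) * n_boot) - 1]


def cohort_report(records: Sequence[Record], threshold: float = 0.5) -> Dict[str, dict]:
    by_segment: Dict[str, List[Record]] = defaultdict(list)
    for r in records:
        by_segment[r.segment].append(r)

    report = {}
    for segment, rows in by_segment.items():
        n = len(rows)
        # 1 if the thresholded decision matched the outcome, else 0.
        correct = [int((r.score >= threshold) == bool(r.outcome)) for r in rows]
        base_rate = sum(r.outcome for r in rows) / n
        mean_score = sum(r.score for r in rows) / n
        report[segment] = {
            "n": n,
            "base_rate": base_rate,
            "mean_score": mean_score,
            # Calibration-in-the-large: positive means the model over-predicts.
            "calibration_gap": mean_score - base_rate,
            "accuracy": sum(correct) / n,
            "accuracy_ci95": bootstrap_ci(correct),
        }
    return report
```

Small cohorts will show wide intervals, which is the point: a minority region with a few hundred labeled examples should not be read with the same confidence as the global average.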

2. “We’ll notice when it breaks”

You probably won’t.

Example pattern from a real system (anonymized):

- E-commerce recommender system.

- Data ingestion pipeline silently dropped a new product category due to an unhandled schema change.

- Model kept serving; monitoring covered only latency and HTTP 500s.

- For 6 weeks, products in the new category had ~0 probability of being recommended.

- The measured KPI dipped ~3–4%, but the drop was blamed on “seasonality” until a post-mortem on missed revenue traced it to the missing category.

Where this comes from:

- Over-reliance on system metrics (latency, uptime) vs. data metrics.

- No synthetic tests for extreme or edge cases.

- No checks on coverage (e.g., “What fraction of inventory/users receive non-trivial scores?”).

Fix:

- Add schema and distribution checks at:

- Raw data ingestion

- Feature computation

- Pre-inference request

- Build canary inputs and synthetic cases that must pass before deploy (e.g., a known “fraud-like” pattern that must be flagged).
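The checks described above (schema validation, coverage, and must-pass canary cases) can be sketched as small functions that a pipeline or CI job calls. Everything here is illustrative: `EXPECTED_SCHEMA`, the 1e-3 “non-trivial score” floor, and the canary cases are hypothetical, and the model is any callable that returns a score.

```python
from collections import defaultdict
from typing import Callable, Dict, Iterable, List, Mapping, Tuple

# Hypothetical expected schema for one ingested row.
EXPECTED_SCHEMA: Dict[str, type] = {"item_id": str, "category": str, "price": float}


def schema_violations(row: Mapping[str, object],
                      schema: Mapping[str, type] = EXPECTED_SCHEMA) -> List[str]:
    """Report missing, mistyped, and unexpected columns instead of silently dropping rows."""
    problems = []
    for column, expected in schema.items():
        if column not in row:
            problems.append(f"missing column: {column}")
        elif not isinstance(row[column], expected):
            problems.append(f"{column}: expected {expected.__name__}, "
                            f"got {type(row[column]).__name__}")
    for column in sorted(row.keys() - schema.keys()):
        problems.append(f"unexpected column: {column}")
    return problems


def coverage_by_category(scored: Iterable[Tuple[str, float]],
                         min_score: float = 1e-3) -> Dict[str, float]:
    """Fraction of items in each category whose score is above a trivial floor."""
    totals: Dict[str, int] = defaultdict(int)
    covered: Dict[str, int] = defaultdict(int)
    for category, score in scored:
        totals[category] += 1
        covered[category] += int(score >= min_score)
    return {c: covered[c] / totals[c] for c in totals}


def failed_canaries(model: Callable[[dict], float],
                    cases: Iterable[Tuple[str, dict, float, float]]) -> List[str]:
    """Each case is (name, input, min_score, max_score); return names that fall outside."""
    return [name for name, x, lo, hi in cases if not lo <= model(x) <= hi]
```

In the recommender incident above, a per-category coverage check would likely have shown the new category sitting near 0.0 within a day, rather than after six weeks.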

3. “Drift monitoring” that measures nothing useful

Standard drift solutions often:

- Compare train vs. serving feature distributions with KS tests or PSI.

- Alarm frequently on harmless shifts (e.g., organic growth in a region).

- Fail to correlate drift with any business or quality metric.

Result: Page fatigue, muted alerts, and drift dashboards that executives glance at once a quarter.

Better framing:

Drift matters only insofar as it affects decision quality or safety/fairness.

Concretely:

- Prioritize features by:

- Their importance in the model (SHAP, permutation importance).

- Their sensitivity to real-world shifts (e.g., prices, behavioral signals).

- For top features:

- Track joint distributions and conditional distributions (e.g., user_segment × feature).

- Tie each drift alarm to:

- A specific downstream risk (e.g., “bad credit decisions for cohort X”).

- A runbook: rollback, retrain, or raise a human review threshold.
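A minimal sketch of importance-gated drift monitoring: compute PSI only for the most important features and attach a downstream risk and runbook action to every alarm. The 0.25 threshold is a widely used rule of thumb for PSI rather than a standard, the importance scores are assumed to come from SHAP or permutation importance computed elsewhere, and `RUNBOOK` is a hypothetical mapping.

```python
import math
from bisect import bisect_right
from typing import Dict, List, Sequence, Tuple


def psi(reference: Sequence[float], current: Sequence[float],
        n_bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index with bin edges at reference quantiles."""
    ref = sorted(reference)
    edges = [ref[len(ref) * i // n_bins] for i in range(1, n_bins)]

    def shares(values: Sequence[float]) -> List[float]:
        counts = [0] * n_bins
        for v in values:
            counts[bisect_right(edges, v)] += 1
        # Floor empty bins at eps so the log term stays finite.
        return [max(c / len(values), eps) for c in counts]

    expected, actual = shares(reference), shares(current)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


# Hypothetical mapping from feature to (downstream risk, runbook action).
RUNBOOK: Dict[str, Tuple[str, str]] = {
    "income": ("bad credit decisions for cohort X", "raise human review threshold"),
    "txn_count": ("missed fraud on a shifted pattern", "retrain"),
}


def drift_alerts(importance: Dict[str, float],
                 reference: Dict[str, Sequence[float]],
                 current: Dict[str, Sequence[float]],
                 top_k: int = 3, threshold: float = 0.25):
    """PSI only on the top-k most important features; each alert carries its runbook entry."""
    top = sorted(importance, key=importance.get, reverse=True)[:top_k]
    alerts = []
    for feature in top:
        value = psi(reference[feature], current[feature])
        if value > threshold:
            alerts.append((feature, value,
                           RUNBOOK.get(feature, ("unmapped risk", "triage manually"))))
    return alerts
```

Running `psi` separately within each segment (for example, per `user_segment`) gives the conditional view described above, which can catch a shift that the pooled distribution hides.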

4. Feature pipelines as an afterthought

Feature pipelines quietly become:

- The biggest line item in your cloud bill (especially with wide feature sets + joins).

- A security exposure (PII flowing into logs or debug dumps).

- The main source of incidents (missing joins, stale caches, timezone mismatches).

Example pattern:

- A fraud system built over 18 months.

- Added features for every new fraud pattern, resulting in:

- 40+ feature sources

- Multiple cross-region data pulls

- Heavy point-in-time corrections for training

- Inference latency ballooned; engineers had to add aggressive caching that created staleness and subtle bugs.

- Nobody owned a holistic “cost-to-value” review of features.

Fix:

- Treat features like code:

- Ownership, tests, deprecation process.

- Periodically run:

- Feature ablation: remove features and measure impact on quality vs. cost and latency.

- Lineage review: where does each feature originate, what privacy rules apply.
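“Treat features like code” can start as small as a declarative registry checked in CI. This sketch assumes a hypothetical `FeatureSpec` record; its fields mirror the ownership, lineage, privacy, and deprecation concerns above, and the cost field holds whatever estimate your billing data supports.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional, Sequence


@dataclass(frozen=True)
class FeatureSpec:
    name: str
    owner: Optional[str]             # team accountable for the feature
    source: str                      # upstream table or stream (lineage)
    contains_pii: bool
    privacy_rule: Optional[str]      # documented handling rule if PII is present
    monthly_cost_usd: float          # estimated compute + storage cost
    deprecate_after: Optional[date] = None


def registry_problems(specs: Sequence[FeatureSpec], today: date) -> List[str]:
    """Checks meant to run in CI, the same way unit tests guard code."""
    problems = []
    for s in specs:
        if not s.owner:
            problems.append(f"{s.name}: no owner")
        if not s.source:
            problems.append(f"{s.name}: unknown lineage")
        if s.contains_pii and not s.privacy_rule:
            problems.append(f"{s.name}: PII without a documented privacy rule")
        if s.deprecate_after is not None and s.deprecate_after < today:
            problems.append(f"{s.name}: past its deprecation date")
    return problems


def review_queue(specs: Sequence[FeatureSpec], k: int = 5) -> List[str]:
    """Most expensive features first: the natural candidates for ablation."""
    ranked = sorted(specs, key=lambda s: s.monthly_cost_usd, reverse=True)
    return [s.name for s in ranked[:k]]
```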

5. “Offline good, online bad”

Another observed pattern:

- Model has strong offline metrics.

- Goes to production, seems fine.

- After launch:

- Users change behavior in response to the model (e.g., they game recommendations, alter input fields).

- Upstream teams deploy changes (new registration fields, new UI flows).

- Label definitions shift (e.g., what’s “fraud” when ops changes review policy).

The model becomes misaligned without any obvious error.

Fix:

- Revisit label definitions quarterly; they are socio-technical, not static.

- Include product and ops in the evaluation loop; they see label drift before engineers do.

- Use “causal-ish” evaluation where possible (A/B tests or quasi-experiments) to separate:

- “The world changed”

- “The world changed because of your model”
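A randomized holdout is the simplest “causal-ish” tool here: keep a random slice of traffic on the previous model or a non-ML baseline, and compare outcome rates between arms. The sketch below is a standard two-proportion z-test; it assumes units are randomized independently and that outcomes in one arm don't affect the other, an assumption that feedback loops can violate.

```python
import math
from typing import Tuple


def two_proportion_ztest(success_a: int, n_a: int,
                         success_b: int, n_b: int) -> Tuple[float, float, float]:
    """Return (rate_b - rate_a, z, two-sided p-value) for independent arms A and B."""
    p_a, p_b = success_a / n_a, success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # Both arms are all-0 or all-1: no evidence of a difference.
        return p_b - p_a, 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return p_b - p_a, z, p_value
```

A shift that shows up in both arms is the world changing; a gap between the arms is attributable to the model.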

Practical playbook (what to do in the next 7 days)

Assume you already have at least one production ML system. Here’s a minimal, non-theoretical plan.

Day 1–2: Baseline what’s real

1. Inventory one critical model (not all of them). For that model, write down:

- Inputs → features → model → outputs → actions.

- Where it runs (services, regions), typical QPS, tail latency.

- Owner(s): code, data, operations.

2. List current metrics:

- Quality: which, how often, where computed.

- System: latency, error rates.

- Business: conversion, fraud rate, NPS, etc.

3. Ask three questions:

- How do we know this model is misbehaving right now?

- How would we roll back safely?

- Who is harmed if it degrades for a specific cohort?

If any answer is “we don’t know,” mark that as a gap.

Day 3–4: Add minimal useful monitoring

For the same model:

1. Segmented evaluation (even if offline/batch-only to start):

- Pick 3–5 key segments (region, device type, new vs. returning users).

- Compute your primary quality metric per segment, over time.

- Graph at least 3 months if you can.

2. Data health checks:

- For top 10 features by importance:

- Track mean, variance, missing rate, cardinality (for categoricals).

- Compare last 7 days vs. previous 30 days.

- Set conservative alert thresholds:

- Missing rate jump >X%

- Sharp cardinality change

- Distribution shifts on crucial features


3. Basic logging for evaluation:

- Store: prediction, key features, decision threshold, and eventual outcome (when available).

- Ensure PII handling is compliant; anonymize or hash where possible.
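The Day 3–4 data health checks fit in a few functions. This is a sketch under assumptions: values arrive as plain lists with `None` for missing entries, the caller slices the 7-day and 30-day windows, and the default thresholds are conservative placeholders standing in for the “>X%” above, not recommendations.

```python
import math
import statistics
from typing import Dict, List, Optional, Sequence


def profile(values: Sequence[Optional[object]], categorical: bool) -> Dict[str, float]:
    """Missing rate plus cardinality (categoricals) or mean/variance (numerics)."""
    present = [v for v in values if v is not None]
    stats: Dict[str, float] = {
        "missing_rate": 1 - len(present) / len(values) if values else 1.0}
    if categorical:
        stats["cardinality"] = len(set(present))
    elif len(present) >= 2:
        stats["mean"] = statistics.fmean(present)
        stats["variance"] = statistics.variance(present)
    return stats


def health_alerts(recent: Sequence[Optional[object]],
                  baseline: Sequence[Optional[object]],
                  categorical: bool, max_missing_jump: float = 0.05,
                  max_cardinality_ratio: float = 1.5,
                  max_mean_shift_sd: float = 0.5) -> List[str]:
    """Compare a recent window (e.g. last 7 days) with a baseline (e.g. previous 30 days)."""
    r, b = profile(recent, categorical), profile(baseline, categorical)
    alerts = []
    if r["missing_rate"] - b["missing_rate"] > max_missing_jump:
        alerts.append("missing rate jump")
    if categorical:
        low, high = sorted((r["cardinality"], b["cardinality"]))
        if low == 0 or high / low > max_cardinality_ratio:
            alerts.append("cardinality change")
    elif "mean" in r and b.get("variance", 0) > 0:
        # Shift of the recent mean, in units of the baseline standard deviation.
        shift = abs(r["mean"] - b["mean"]) / math.sqrt(b["variance"])
        if shift > max_mean_shift_sd:
            alerts.append("mean shift")
    return alerts
```

Run it for the top 10 features by importance and route any non-empty result to the owner recorded on Day 1–2.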

Day 5–6: Tackle one obvious cost/perf win

Use your observability to find a quick, concrete improvement:

1. Latency and cost:

- Identify the slowest 5% of requests.

- Check:

- Are we fetching rarely-used, expensive features?

- Are we calling the model with batch size 1 when we could batch?

- Potential actions:

- Simplify or cache select features.

- Introduce micro-batching where latency allows.

- Try model quantization or a smaller distilled model for low-risk traffic.

2. Feature ablation mini-experiment:

- In a shadow or offline setting, remove an expensive feature set.

- Measure:

- ΔQuality

- ΔLatency / ΔCompute cost

- If the quality loss is negligible, plan to remove the feature set in the next sprint.
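The ablation decision itself is simple arithmetic once you have a baseline run and an ablated run. A minimal sketch, assuming a hypothetical `RunSummary` per run and a placeholder tolerance of 0.002 for “negligible” quality loss; agree on the tolerance with the business owner before seeing the result, not after.

```python
import statistics
from dataclasses import dataclass
from typing import Dict, Sequence


@dataclass(frozen=True)
class RunSummary:
    quality: float                 # primary offline metric, e.g. AUROC
    latencies_ms: Sequence[float]  # per-request latency samples from a shadow run
    cost_per_1k: float             # compute cost per 1,000 predictions


def p95(samples: Sequence[float]) -> float:
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    return statistics.quantiles(samples, n=20)[-1]


def ablation_verdict(baseline: RunSummary, ablated: RunSummary,
                     max_quality_loss: float = 0.002) -> Dict[str, object]:
    d_quality = ablated.quality - baseline.quality
    d_p95 = p95(ablated.latencies_ms) - p95(baseline.latencies_ms)
    d_cost = ablated.cost_per_1k - baseline.cost_per_1k
    # Remove only if quality loss is within tolerance AND something actually got cheaper.
    negligible = -d_quality <= max_quality_loss
    return {"d_quality": d_quality, "d_p95_ms": d_p95, "d_cost_per_1k": d_cost,
            "plan_removal": negligible and (d_p95 < 0 or d_cost < 0)}
```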

Day 7: Decide on one structural upgrade

From what you’ve learned, pick one structural investment for the next quarter:

- A basic model registry and versioning discipline.

- A move from ad-hoc feature scripts to a shared feature pipeline with ownership.

- A formal incident runbook for the ML system (with business and ops involvement).

- A dedicated ML evaluation job (nightly or hourly) with alerting to Slack/PagerDuty.

Write it down, assign an owner, and define a success metric (e.g., “mean time to detect (MTTD) data issues drops from weeks to hours”).
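Tracking MTTD only requires that each incident record two timestamps: when the problem actually began (often reconstructed during the post-mortem) and when someone or something detected it. A minimal sketch, assuming incidents are available as hypothetical `(started_at, detected_at)` pairs:

```python
from datetime import datetime, timedelta
from typing import Iterable, Tuple


def mean_time_to_detect(incidents: Iterable[Tuple[datetime, datetime]]) -> timedelta:
    """Average gap between when an issue started and when it was detected."""
    gaps = [detected - started for started, detected in incidents]
    if not gaps:
        raise ValueError("no incidents recorded")
    return sum(gaps, timedelta()) / len(gaps)
```

By this measure, the recommender incident described earlier contributes a six-week gap on its own, which is why a single silent failure can dominate the average.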

Bottom line

Production ML is no longer just a technical curiosity; it’s infrastructure that shapes credit access, job screening, content visibility, fraud detection, and more. That makes evaluation, monitoring, drift handling, and feature pipelines part of your organization’s social contract, not just your architecture diagram.

The uncomfortable reality:

- Most “ML incidents” are data and process incidents.

- Most “algorithmic harm” is unmeasured performance on the wrong cohorts.

- Most “ML cost overruns” are feature pipeline and model bloat, not core innovation.

You don’t need a dozen new tools to fix this. You need:

- Clear ownership of the four loops (prediction, evaluation, monitoring, learning).

- Metrics that connect model behavior to user impact and cost.

- A culture that treats ML systems like any other critical distributed system: observable, testable, and debuggable.

If your team can’t explain how your model might fail, who it would hurt, and how you’d detect that within hours, then your problem isn’t accuracy—it’s governance. And in 2024, that’s a tech and society problem, not just an engineering one.