Your ML Model Isn’t Broken — Your Production Loop Is

Why this matters right now

Everyone “has ML in production” now. But look closer and most setups fall into one of these buckets:

- The model worked in the A/B test and quietly degraded over 6–18 months.

- The infra is overbuilt (Kafka + a Feast feature store + 3 monitoring vendors) yet nobody can answer “Did model performance get worse last week?”

- The cloud bill is driven by “ML inference” and no one can explain why p95 latency doubled after that “small” feature change.

What’s changed in the last 18–24 months isn’t magic models. It’s volume and blast radius:

- More decisions are fully automated (pricing, routing, content ranking, fraud flags).

- Models are updated more often (weekly/daily vs yearly).

- Data distributions are moving faster (user behavior, bots, competitors, regulation).

If you’re a tech lead or CTO, your risk isn’t “missing out on GenAI.” It’s:

- Silent performance regressions that only show up as NPS drops or churn 3 months later.

- Cost blow-ups from unbounded inference, fan-out feature calls, and oversized models.

- Compliance and security exposure from unmonitored input/output behavior.

This post is about the boring but critical pieces: evaluation, monitoring, drift, feature pipelines, and cost/performance trade-offs—as they actually behave in production.

What’s actually changed (not the press release)

Three concrete shifts have raised the bar for applied machine learning in production:

1. Feedback cycles are shorter and noisier

- Online products now push experiments continuously.

- Marketing, UX, sales, pricing, and ML all nudge the same KPIs.

- Attribution got harder: when conversion drops by 3%, is it the model, a new UX flow, or seasonality?

Result: You can’t rely on a monthly offline evaluation and a yearly retrain. You need ongoing, model-specific signal.

2. Operational complexity moved from “training” to “serving + data”

Training used to be the big scary batch job. Now:

- Models are smaller, cheaper, and easier to train.

- The messy parts are:

- Keeping features consistent between training and serving.

- Managing drift in upstream data and downstream labels.

- Coordinating many small models and rules that interact.

The ML system is now a live service with state, dependencies, and SLOs—not a one-off artifact.

3. Cost/performance trade-offs are now first-order

- Embeddings, rerankers, and LLMs get used in more request paths.

- Latency budgets are tight; concurrency is high.

- Inference cost is often 20–60% of infra spend for ML-heavy products.

Running “the best model” isn’t responsible if it violates latency SLOs or burns 3× the budget for 0.3% relative lift.

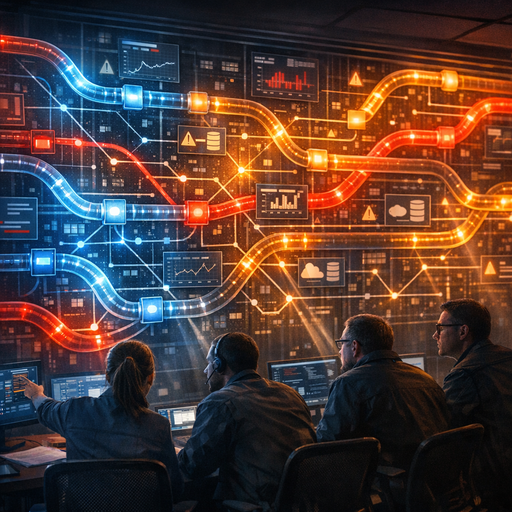

How it works (simple mental model)

Forget the usual “train / validate / deploy” triangle. For production ML, a more useful mental model is a closed loop with five stages:

- Data → Features

- Model → Inference

- Decisions → User/System Impact

- Outcomes → Labels / Proxies

- Evaluation → Monitoring → Change

1. Data → Features

- Sources: event logs, transactional DBs, third-party APIs, user input.

- Transform: join, aggregate, encode, normalize.

- Output: features with clear definitions and contracts.

Key properties:

- Determinism: Same input → same features in training and serving.

- Latency awareness: Some features are cheap and real-time; others are delayed by hours/days.

- Lineage: You can answer, “What raw data generated this feature?”

2. Model → Inference

- A model is just a function: y_hat = f(features, parameters).

- In production:

- It runs under latency and availability constraints.

- It must handle bad inputs: nulls, out-of-range, new categories, adversarial requests.

- It should emit telemetry (inputs, outputs, timing, version).
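The bad-input and telemetry points above can be sketched as a thin wrapper around the model call. This is a minimal illustration, not a reference implementation: `CONTRACT`, `sanitize`, `predict_with_telemetry`, the feature names, and the fallback values are all hypothetical, and the right defaults depend on how the model was trained.

```python
import math
import time

# Hypothetical feature contract: (min, max) for numeric features,
# a set of known categories for categorical ones.
CONTRACT = {
    "amount": (0.0, 10_000.0),
    "country": {"US", "DE", "JP"},
}
UNKNOWN = "__unknown__"


def sanitize(features):
    """Return (cleaned features, list of issues found)."""
    clean, issues = {}, []
    for name, rule in CONTRACT.items():
        value = features.get(name)
        if isinstance(rule, set):
            if value not in rule:
                issues.append(f"{name}:unseen_category")
                value = UNKNOWN
        else:
            lo, hi = rule
            if not isinstance(value, (int, float)) or math.isnan(value):
                issues.append(f"{name}:missing_or_invalid")
                value = lo  # assumed default; use whatever training used
            elif not lo <= value <= hi:
                issues.append(f"{name}:out_of_range")
                value = min(max(value, lo), hi)  # clamp into the contract range
        clean[name] = value
    return clean, issues


def predict_with_telemetry(model, features, version):
    """Call the model on sanitized inputs and return a telemetry record."""
    start = time.perf_counter()
    clean, issues = sanitize(features)
    score = model(clean)
    return {
        "model_version": version,
        "score": score,
        "input_issues": issues,
        "latency_ms": (time.perf_counter() - start) * 1000.0,
    }
```

Counting `input_issues` per model version is often enough to spot an upstream change before it shows up in quality metrics.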

3. Decisions → User/System Impact

The model output isn’t the impact. Something consumes it:

- A business rule engine (e.g., thresholds, safety checks).

- Downstream services (e.g., ranking, pricing, routing).

- Human-in-the-loop workflows (e.g., fraud analyst review queues).

This layer is often where cost and risk accumulate:

- Increasing model call fan-out (e.g., N rerankers per page).

- Combining multiple models without understanding interactions.

4. Outcomes → Labels / Proxies

Most production systems don’t get clean, immediate labels.

Examples:

- CTR models: a label exists only for impressions the user actually saw, whether or not they clicked.

- Fraud: label sometimes arrives weeks later after chargebacks.

- Recommendation quality: proxies like session length or repeat visits.

You end up with:

- Delayed labels: Evaluate on a sliding window of “matured” data.

- Partial labels: Only some decisions ever get labeled.

- Proxy metrics: Need validation that they correlate with real business outcomes.

5. Evaluation → Monitoring → Change

Two distinct but related loops:

- Evaluation (slow loop):

- Offline: periodic batch jobs compare model predictions vs labels on historical data.

- Online: experiments compare variants by business KPIs.

- Monitoring (fast loop):

- Feature distributions, input schemas, error rates, latency, and output ranges.

- Alert when things move outside expected bounds or prior patterns.

Change is then:

- Retraining or fine-tuning.

- Rolling out a new version or fallback model.

- Adjusting feature computation.

- Updating business logic on top.

Most of the scary production failures trace back to one of these five stages being treated as “set and forget.”

Where teams get burned (failure modes + anti-patterns)

Failure mode 1: “We monitor the business KPI, so we’re fine”

Pattern:

- Team deploys a model.

- Product monitors signups, revenue, NPS.

- Nobody tracks precision/recall, calibration, or ranking quality.

What goes wrong:

- Model degrades; another part of the system compensates (discounts, more traffic, manual ops).

- By the time the business KPI visibly dips, the regression is months old and entangled with many other changes.

Anti-pattern:

- Treating ML like a black-box feature and delegating responsibility to “overall product health.”

What to do instead:

- Track model-centric metrics (e.g., AUC, lift vs baseline, coverage, calibration error) separately from product KPIs.

- Alert on model metrics deviating from a historical band, even if business metrics look stable.
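A “historical band” can be as simple as mean plus or minus a few standard deviations of the metric's recent daily values. A minimal sketch, assuming one metric value per day; the function name `outside_band` and the default `k=3.0` are illustrative choices, not a recommendation:

```python
from statistics import mean, stdev


def outside_band(history, today, k=3.0):
    """True if today's metric is more than k standard deviations
    away from the mean of the historical values."""
    if len(history) < 2:
        return False  # not enough history to define a band
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return today != mu
    return abs(today - mu) > k * sigma


daily_auc = [0.81, 0.80, 0.82, 0.79, 0.81, 0.80, 0.82]
print(outside_band(daily_auc, 0.74))  # True: well below the recent band
print(outside_band(daily_auc, 0.80))  # False: inside the band
```

A fixed band like this ignores weekly seasonality; comparing against the same weekday in previous weeks is a common refinement.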

Failure mode 2: “We have drift alerts, but nobody trusts them”

Pattern:

- Team sets up KL divergence / PSI / KS test alerts on every feature.

- Dashboards show daily “red” drift alarms.

- Either:

- People tune them until they never fire, or

- People ignore them entirely.

What goes wrong:

- Real distribution shifts get buried in noise:

- Seasonality (weekends vs weekdays).

- Product launches.

- Region mix changes.

Anti-pattern:

- Over-monitoring individual features without connecting to model performance or business context.

What to do instead:

- Distinguish:

- Operational drift: schema changes, missing features, obvious pipeline bugs.

- Statistical drift: change in feature or prediction distributions that might matter.

- Only page humans on:

- Schema / contract violations.

- Large shifts in predictions and key features that correlate with known performance changes.

- Periodically backtest: “When drift was high in the past, did performance change?” If not, down-weight that signal.
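One rough way to run that backtest is to correlate each day's drift score with the model-metric drop later measured for that day. The sketch below assumes you already have both series aligned by day; `drift_signal_report` and the `min_corr=0.3` cut-off are hypothetical, and a correlation over a handful of days is weak evidence either way.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation; returns 0.0 when it is undefined."""
    n = len(xs)
    if n < 2 or n != len(ys):
        return 0.0
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        return 0.0
    return cov / sqrt(vx * vy)


def drift_signal_report(drift_scores, metric_drops, min_corr=0.3):
    """Decide whether a drift signal has historically tracked performance drops."""
    r = pearson(drift_scores, metric_drops)
    return {"correlation": r, "keep_alert": r >= min_corr}


# Hypothetical daily values: drift score vs. drop in AUC for the same day.
drift = [0.01, 0.02, 0.30, 0.02, 0.25]
drops = [0.000, 0.001, 0.040, 0.000, 0.030]
print(drift_signal_report(drift, drops)["keep_alert"])  # True
```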

Failure mode 3: Training-serving skew and “shadow bugs”

Pattern:

- Features are computed:

- One way in offline batch for training.

- Another way in online services for inference.

- Minor differences: time windows, defaults, encoding, null handling.

What goes wrong:

- The model looks great offline but underperforms in production.

- Edge cases (missing values, new categories) behave differently online vs offline.

- Debugging requires diffing two subtly different pipelines.

Anti-pattern:

- Separate teams owning training code vs serving code, with no shared library or contracts.

What to do instead:

- Use shared feature definitions:

- The same code, or at least the same tests, for training and serving.

- Contract tests on derived features: given a frozen input snapshot, training and serving produce identical outputs.

- Log features + predictions for a sample of production traffic.

- Periodically replay them through the training-time stack to detect skew.
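A contract test of this kind can be very small. The sketch below runs a frozen snapshot through two feature implementations and reports every mismatch. `training_features` and `serving_features` are hypothetical stand-ins for the batch and online code paths, and the serving version carries a deliberate null-handling bug so the test has something to find.

```python
import math


def training_features(row):
    """Offline (batch) feature computation."""
    amount = row.get("amount")
    return {
        "log_amount": math.log1p(amount) if amount is not None else 0.0,
        "is_weekend": 1 if row["day_of_week"] >= 5 else 0,
    }


def serving_features(row):
    """Online feature computation. Deliberate bug for illustration:
    a missing amount defaults to -1.0 instead of 0.0."""
    amount = row.get("amount")
    return {
        "log_amount": math.log1p(amount) if amount is not None else -1.0,
        "is_weekend": 1 if row["day_of_week"] >= 5 else 0,
    }


def find_skew(snapshot, train_fn, serve_fn, tol=1e-9):
    """Return (row index, feature, offline value, online value) for each mismatch."""
    mismatches = []
    for i, row in enumerate(snapshot):
        offline, online = train_fn(row), serve_fn(row)
        for name in sorted(set(offline) | set(online)):
            a, b = offline.get(name), online.get(name)
            if a is None or b is None or abs(a - b) > tol:
                mismatches.append((i, name, a, b))
    return mismatches


SNAPSHOT = [
    {"amount": 12.5, "day_of_week": 2},
    {"amount": None, "day_of_week": 6},  # edge case: missing value
]

print(find_skew(SNAPSHOT, training_features, serving_features))
# [(1, 'log_amount', 0.0, -1.0)]
```

The snapshot is only as useful as its edge cases, so it is worth seeding it with nulls, unseen categories, and boundary timestamps from real traffic.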

Failure mode 4: Ignoring cost/performance until finance calls

Pattern:

- Team picks the best model on offline metrics.

- Inference is “fast enough” in early tests.

- Traffic grows; new use cases reuse the same model.

- p95 latency creeps up, infra cost climbs, SLOs become fragile.

What goes wrong:

- Emergency “optimize it” work: caching, quantization, pruning, moving to GPUs/TPUs.

- Pressure to roll back to a simpler baseline model.

Anti-pattern:

- Evaluating models only on quality metrics without a cost-per-unit-lift mindset.

What to do instead:

- Treat cost/latency as first-class metrics during model selection.

- For each candidate: measure QPS, p95/p99 latency, and $/1K predictions on realistic loads.

- Maintain multiple models:

- A cheap baseline with predictable behavior.

- One or more expensive specialists used only where lift justifies cost.

- Add simple guardrails:

- Rate limits per tenant/use case.

- Budget caps for expensive paths.
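The cost-per-unit-lift idea comes down to two small calculations. The sketch below is back-of-the-envelope arithmetic with made-up numbers; the function names are hypothetical, and “cost” here covers only serving infrastructure at a sustained load, not training or engineering time.

```python
def cost_per_1k_predictions(hourly_infra_cost_usd, sustained_qps):
    """Serving cost in USD per 1,000 predictions at a sustained request rate."""
    if sustained_qps <= 0:
        raise ValueError("sustained_qps must be positive")
    return hourly_infra_cost_usd / (sustained_qps * 3600) * 1000


def cost_per_lift_point(base_cost, cand_cost, base_metric, cand_metric):
    """Extra USD per 1K predictions for each percentage point of
    relative lift the candidate delivers over the baseline."""
    lift_pct = (cand_metric - base_metric) / base_metric * 100
    if lift_pct <= 0:
        return float("inf")  # costs more (or the same) for no gain
    return (cand_cost - base_cost) / lift_pct


# Hypothetical numbers: baseline on a $3.60/h node at 100 QPS,
# candidate on a $10.80/h node at 100 QPS, metric 0.80 -> 0.84.
base = cost_per_1k_predictions(3.60, 100)   # about $0.01 per 1K
cand = cost_per_1k_predictions(10.80, 100)  # about $0.03 per 1K
print(cost_per_lift_point(base, cand, 0.80, 0.84))  # about 0.004
```

Putting this number next to the quality metric in every model comparison makes the trade-off explicit at selection time rather than when the bill arrives.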

Practical playbook (what to do in the next 7 days)

Assume you already have at least one ML model in production. Here’s a pragmatic 7-day plan for applied ML reliability and observability.

Day 1–2: Inventory and “truth table”

- List your production models:

- For each: purpose, owner, major inputs, key outputs.

- For each model, write a 1-page “truth table”:

- What decision does this model influence?

- What is the primary performance metric for the model?

- Where do labels come from, and with what delay?

- What is the latency budget and target $ per 1K predictions?

If you can’t answer those, you’ve found your first risk item.

Day 3: Basic telemetry

For each model service:

- Ensure you log, at minimum:

- Model version.

- A hash or ID of the feature payload (to join later without logging PII-heavy raw features if needed).

- Prediction/score.

- Latency and errors.

- For a sampled subset (e.g., 0.1–1% of traffic), log:

- Full features (subject to privacy and compliance).

- The downstream decision (e.g., “approved”, “rejected”, “shown at rank 3”).

You need this to debug, replay, and evaluate.
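As a rough sketch of what such a log record could look like: every request gets the cheap fields plus a payload hash, and a deterministic sample also gets full features and the downstream decision. The field names, `build_log_record`, and the hash-based sampling scheme are assumptions for illustration, not a standard.

```python
import hashlib
import json


def payload_hash(features):
    """Stable hash of the feature payload (key order does not matter)."""
    canonical = json.dumps(features, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def in_sample(request_id, rate=0.01):
    """Deterministic sampling: the same request ID always gets the same answer."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") / 2**32  # uniform in [0, 1)
    return bucket < rate


def build_log_record(request_id, model_version, features, score,
                     latency_ms, decision, rate=0.01):
    record = {
        "request_id": request_id,
        "model_version": model_version,
        "payload_hash": payload_hash(features),
        "score": score,
        "latency_ms": latency_ms,
    }
    if in_sample(request_id, rate):
        # Sampled subset only: full features and the downstream decision.
        record["features"] = features
        record["decision"] = decision
    return record
```

Sampling on the request ID rather than at random means every service that sees the request makes the same sampling decision, which keeps joins complete. Note that hashing a low-entropy payload does not anonymize it, so the privacy review still applies.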

Day 4: Simple monitoring, no ML magic

Set up concrete checks:

- Schema checks:

- Required features present.

- Types and value ranges as expected.

- Operational metrics:

- Error rate, timeouts, queue length, latency.

- Output sanity:

- Distribution of scores: mean, std, and the share of out-of-range or NaN values.

- Volume per category (for classifiers): sudden drops/spikes.

Start with static thresholds and simple alerts. Most issues are operational, not subtle drift.
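The output-sanity checks need nothing beyond basic statistics. A minimal sketch, assuming scores are probabilities in [0, 1] and decisions are class labels; `score_summary`, `class_share_shifts`, and the 10-point threshold are illustrative names and values:

```python
import math
from collections import Counter


def score_summary(scores, lo=0.0, hi=1.0):
    """Mean and std of valid scores, plus the share of NaN or out-of-range ones."""
    valid = [s for s in scores if not math.isnan(s) and lo <= s <= hi]
    n = len(scores)
    if not valid:
        return {"mean": float("nan"), "std": float("nan"),
                "invalid_rate": 1.0 if n else 0.0}
    mean = sum(valid) / len(valid)
    var = sum((s - mean) ** 2 for s in valid) / len(valid)
    return {"mean": mean, "std": math.sqrt(var),
            "invalid_rate": (n - len(valid)) / n}


def class_share_shifts(reference, current, max_abs_change=0.10):
    """Classes whose share of predictions moved by more than max_abs_change."""
    ref, cur = Counter(reference), Counter(current)
    shifts = {}
    for label in set(ref) | set(cur):
        ref_share = ref[label] / len(reference)
        cur_share = cur[label] / len(current)
        if abs(cur_share - ref_share) > max_abs_change:
            shifts[label] = (ref_share, cur_share)
    return shifts


print(score_summary([0.2, 0.4, float("nan"), 1.5])["invalid_rate"])  # 0.5
```

A static alert such as “invalid_rate above 1%” or “any class share moved by more than 10 points day over day” is a reasonable starting threshold to tune from.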

Day 5: “Matured label” evaluation job

Pick one important model and:

- Define a label maturation window (e.g., 7 days post-prediction).

- Build a daily batch job that:

- Joins past predictions (from logs) with outcomes where labels have matured.

- Computes model metrics: AUC, log loss, precision/recall, ranking metrics—whatever is appropriate.

- Store the results in a time-series table or dashboard.

You now have a minimal offline evaluation pipeline based on actual production behavior.
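The core of such a job fits in a few lines. The sketch below assumes predictions are logged as dicts with `request_id`, `score`, and `predicted_at`, and that outcomes arrive as a mapping from request ID to a 0/1 label; those shapes and the function names are hypothetical. The pairwise AUC is quadratic in the number of rows, which is fine for a sample but should be swapped for a library implementation on large volumes.

```python
from datetime import datetime, timedelta


def matured_pairs(predictions, outcomes, now, maturation=timedelta(days=7)):
    """(score, label) pairs for predictions old enough to have a trusted label."""
    cutoff = now - maturation
    return [
        (p["score"], outcomes[p["request_id"]])
        for p in predictions
        if p["predicted_at"] <= cutoff and p["request_id"] in outcomes
    ]


def auc(pairs):
    """Probability that a random positive outscores a random negative
    (ties count as half). Returns None if either class is absent."""
    pos = [s for s, y in pairs if y == 1]
    neg = [s for s, y in pairs if y == 0]
    if not pos or not neg:
        return None
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


now = datetime(2024, 1, 15)
predictions = [
    {"request_id": "a", "score": 0.9, "predicted_at": datetime(2024, 1, 1)},
    {"request_id": "b", "score": 0.8, "predicted_at": datetime(2024, 1, 2)},
    {"request_id": "c", "score": 0.7, "predicted_at": datetime(2024, 1, 3)},
    {"request_id": "d", "score": 0.1, "predicted_at": datetime(2024, 1, 4)},
    {"request_id": "e", "score": 0.6, "predicted_at": datetime(2024, 1, 12)},  # too recent
]
outcomes = {"a": 1, "b": 0, "c": 1, "d": 0, "e": 1}
print(auc(matured_pairs(predictions, outcomes, now)))  # 0.75
```

Writing one row per day (date, model version, metric, row count) is enough to plot the trend and to feed a band-style alert.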

Day 6: Drift checks that matter

Extend evaluation to include:

- Prediction drift:

- Compare today’s score distribution to a 30-day reference window.

- Key feature drift:

- Pick 5–10 features known to be important in the model.

- Track mean, std, and percentiles; add a simple distance metric (e.g., PSI or the KS statistic).

Wire up warning-level alerts (not pages) when:

- Prediction distribution shifts significantly.

- Key features move outside historical bands.

Review these alerts together with performance changes from Day 5’s job. Over time, you’ll learn which drifts correspond to real impact.
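For reference, PSI compares binned shares of a reference window and a current window. A minimal sketch with equal-width bins taken from the reference data; the bin count, the `eps` floor for empty bins, and the function name are assumptions, and the result is sensitive to all of them:

```python
import math


def psi(reference, current, bins=10, eps=1e-6):
    """Population Stability Index between two samples of a numeric value."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp outliers into edge bins
        # Floor empty bins at eps so the log ratio stays finite.
        return [max(c / len(values), eps) for c in counts]

    ref, cur = shares(reference), shares(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


reference = [i / 100 for i in range(100)]       # scores spread over [0, 1)
shifted = [0.5 + i / 200 for i in range(100)]   # scores squeezed into [0.5, 1)
print(psi(reference, reference))  # 0.0
print(psi(reference, shifted) > 0.25)  # True
```

The often-quoted cut-offs of 0.1 and 0.25 are rules of thumb; the Day 5 results are what tell you which PSI levels matter for your model.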

Day 7: Cost / performance sanity review

For your top 1–3 models by traffic or business impact:

- Measure:

- Average and p95/p99 latency under typical load.

- Infra cost per 1K predictions (rough estimate is fine).

- Compare:

- Quality vs a simpler baseline (if you have one).

- Quality vs a smaller or quantized version (if available).

Decide:

- Is the current model overpowered? (Too expensive for marginal lift.)

- Do we need a tiered strategy? (Cheap model for most, expensive model only when ambiguous.)

- Do we need rate limits or budgets to avoid surprise bills?

Write down clear guardrails, even if you don’t implement all the changes immediately.
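A tiered strategy with a budget guardrail can be sketched in a few lines: score everything with the cheap model and escalate to the expensive one only when the cheap score is ambiguous and budget remains. `CallBudget`, `tiered_predict`, and the 0.3 to 0.7 ambiguity band are hypothetical; a real budget would reset per time window and be shared across processes.

```python
class CallBudget:
    """Simple counter capping how many expensive calls may be made."""

    def __init__(self, max_calls):
        self.remaining = max_calls

    def try_spend(self):
        if self.remaining <= 0:
            return False
        self.remaining -= 1
        return True


def tiered_predict(features, cheap_model, expensive_model, budget,
                   low=0.3, high=0.7):
    """Return (score, tier). Escalate only ambiguous cases while budget lasts."""
    score = cheap_model(features)
    # Short-circuit: budget is only spent when the cheap score is ambiguous.
    if low <= score <= high and budget.try_spend():
        return expensive_model(features), "expensive"
    return score, "cheap"


budget = CallBudget(max_calls=1)
cheap = lambda f: f["cheap_score"]
expensive = lambda f: 0.9

print(tiered_predict({"cheap_score": 0.95}, cheap, expensive, budget))  # (0.95, 'cheap')
print(tiered_predict({"cheap_score": 0.50}, cheap, expensive, budget))  # (0.9, 'expensive')
print(tiered_predict({"cheap_score": 0.50}, cheap, expensive, budget))  # (0.5, 'cheap')
```

Logging the tier alongside each prediction lets the Day 5 job report quality per tier, which is what tells you whether the expensive path earns its cost.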

Bottom line

Applied machine learning in production is no longer about who has models—it’s about who has a stable, observable loop from data to decisions to outcomes and back.

You don’t need a feature store, a drift SaaS, or a “real-time AI platform” to get the essentials right. You do need:

- Shared feature definitions and contracts.

- Telemetry that ties predictions to later outcomes.

- Separate but connected monitoring of:

- Operational health,

- Statistical behavior,

- Model performance.

- An explicit view of cost and latency as first-class model metrics.

If your team can answer, with evidence:

- “Is this model still doing its job?”

- “What changed when this metric moved?”

- “What’s the cost of this extra 1% lift?”

…you’re ahead of most organizations claiming to be “AI-driven.” The rest is just implementation detail.