Serverless on AWS Is Cheap Until It Isn’t: A Pragmatic Guide to Cost & Reliability

Why this matters this week

AWS serverless (Lambda, API Gateway, EventBridge, DynamoDB, Step Functions, SQS, etc.) is now old enough that many teams are hitting second‑generation problems:

- “Our Lambda bill exploded and we’re not sure why.”

- “Cold starts and throttling only show up under real traffic.”

- “Observability is a mess across 20+ services.”

- “We built ourselves into a corner with too many functions and events.”

This isn’t about “should we go serverless?”; most teams already have. The question is:

Can you run a large AWS serverless estate with predictable cost, reliability, and debuggability?

This week is a good time to revisit your patterns because:

- Many orgs have just closed or are closing fiscal years and are staring at AWS cost reports.

- AWS has quietly improved knobs around concurrency, logging, and cost visibility.

- Platform teams are being asked to standardise “how we do serverless” instead of every squad improvising.

If you don’t put some engineering discipline around this, serverless becomes the new microservices: conceptually elegant, operationally expensive.

What’s actually changed (not the press release)

A few shifts in the last 12–18 months materially affect how you should design serverless systems on AWS:

Concurrency and scaling controls are now good enough to be dangerous

- Per‑function reserved concurrency and provisioned concurrency are widely used.

- Concurrency scaling limits can prevent downstream meltdowns but also create subtle throttling and backpressure.

- It’s now easier to “tune for performance” and accidentally 5–10x your spend.

CloudWatch and X-Ray got incrementally better, but complexity outpaced them

- You can trace through Lambda, API Gateway, Step Functions, SQS, DynamoDB, but:

- High-cardinality logs and traces are still expensive.

- It’s easy to turn on rich logging everywhere and get a surprise bill.

- Most shops still don’t have a coherent observability strategy for serverless; they have scattered dashboards and ad-hoc metric alarms.

Costs are shifting from compute to glue

- Lambda itself often isn’t your top line item; data transfer, API Gateway (especially REST), Kinesis, and logging can dominate.

- Features like Lambda Extensions and more generous memory configs push developers to “just bump memory” for performance, further raising cost.

Platform engineering is becoming the owner of “serverless standards”

- Instead of each team wiring Lambda + X + Y themselves, platform teams are building:

- Terraform/CDK blueprints.

- Standard logging/metrics/tracing layers.

- Guardrails for concurrency, retries, and DLQs.

- The pain moved from individual apps to cross-cutting operational concerns.

What would change this picture?

- If AWS made pricing and cost forecasting more transparent (e.g., first-class “per-request cost breakdown” for a flow), some of the current detective work would vanish.

- If OpenTelemetry support became truly first-class and consistent across all serverless services, a lot of ad-hoc logging patterns would go away.

Right now, those aren’t here in a fully satisfying way, so you need intentional patterns.

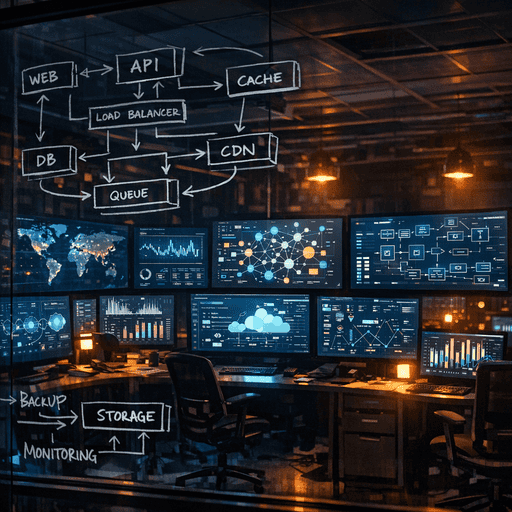

How it works (simple mental model)

Use this mental model when designing or reviewing your AWS serverless architecture:

Three planes: Execution, Flow, and Visibility. Costs and failures leak across all three.

Execution plane (where code runs)

- Lambda, Fargate, Step Functions tasks, containerised workloads.

- Key concerns:

- Concurrency and scaling limits.

- Cold starts and runtime choice.

- Per‑invocation latency and memory/CPU trade‑offs.

- Cost drivers:

- Duration × memory/CPU.

- Provisioned concurrency.

- Extensions and language choices (e.g., Java overhead vs. Node/Python).

Flow plane (how work moves)

- EventBridge, SQS, SNS, Kinesis, API Gateway, AppSync, Step Functions.

- Key concerns:

- Fan-out/fan-in patterns.

- Backpressure and retries.

- Ordering and idempotency.

- Cost drivers:

- Requests/invocations.

- Data volume and retention.

- Cross‑region traffic and DLQs.

Visibility plane (what you can see and control)

- CloudWatch Logs, metrics, X-Ray, custom tracing, third-party APM.

- Key concerns:

- Sampling, cardinality, and retention.

- Alert signal vs. noise.

- Traceability across async boundaries.

- Cost drivers:

- Log volume and retention period.

- High-cardinality metrics.

- Always-on tracing.

If something is “mysteriously” expensive, it’s usually because decisions in one plane (e.g., verbose logging, high fan-out) weren’t accounted for in the others.

Example mental walk-through

A real incident pattern from a fintech org:

- Execution plane: Lambda processing webhook events; memory bumped to 1 GB to avoid timeouts.

- Flow plane: Lambda publishes one message per event to multiple SQS queues + EventBridge.

- Visibility plane: Each invocation logs full payload + downstream responses at INFO.

Result:

- Lambda duration dropped 40% (good), but:

- Lambda cost up ~2x (more memory, and more aggressive concurrency).

- CloudWatch Logs tripled in cost.

- SQS + EventBridge charges doubled due to fan-out plus retries.

The optimisation was local (latency) but not global (TCO). That’s the model in action.
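To make the local-versus-global trade-off concrete, here is a rough cost sketch. It is not the fintech team's actual numbers: the rates are illustrative placeholders to check against the current AWS price list, and the "before" profile (512 MB, 1 s, 2 KB of logs per call) is an assumption made up for the comparison.

```python
from dataclasses import dataclass

# Illustrative rates only; verify against current AWS pricing for your region.
PRICE_PER_GB_SECOND = 0.0000166667    # assumed Lambda compute rate (USD)
PRICE_PER_REQUEST = 0.20 / 1_000_000  # assumed Lambda request rate (USD)
PRICE_PER_GB_LOGGED = 0.50            # assumed CloudWatch Logs ingestion rate (USD)


@dataclass
class Profile:
    invocations: int        # invocations per month
    memory_gb: float        # configured memory
    duration_s: float       # average billed duration per invocation
    log_kb_per_call: float  # average log output per invocation


def monthly_cost(p: Profile) -> dict:
    """Split a function's monthly bill into compute, request, and logging parts."""
    compute = p.invocations * p.memory_gb * p.duration_s * PRICE_PER_GB_SECOND
    requests = p.invocations * PRICE_PER_REQUEST
    logs = p.invocations * p.log_kb_per_call / 1_000_000 * PRICE_PER_GB_LOGGED
    return {"compute": compute, "requests": requests, "logs": logs}


# Hypothetical before/after: memory doubled, duration down 40%, log volume tripled.
before = Profile(invocations=10_000_000, memory_gb=0.5, duration_s=1.0, log_kb_per_call=2.0)
after = Profile(invocations=10_000_000, memory_gb=1.0, duration_s=0.6, log_kb_per_call=6.0)

for name, profile in (("before", before), ("after", after)):
    print(name, {k: round(v, 2) for k, v in monthly_cost(profile).items()})
```

Under these assumptions the compute line rises by a factor of 1.2 even though each invocation is 40% faster, because memory doubled. Any extra concurrency, fan-out, or retries come on top of that, which is why the per-function latency win says little about total cost.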

Where teams get burned (failure modes + anti-patterns)

1. “Infinite” fan-out with no backpressure

Pattern:

- One event comes in, gets fanned out via EventBridge or SNS to N consumers.

- Each consumer might trigger further events, Step Functions, or Lambdas.

Failure modes:

- Cascading retries: one downstream service flaps; retries ripple through the system.

- Cost shock: a small increase in upstream volume is multiplied at every fan-out stage, and again by retries.

Anti-pattern smell:

- Diagrams with lots of arrows and “async for decoupling” as the only justification.

Mitigation:

- Set strict per‑consumer concurrency limits.

- Use SQS buffers between critical producers and fragile consumers.

- Define SLOs that include “downstream dependency failure behaviour.”
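A quick way to sanity-check a fan-out design is to count deliveries per stage before building it. The sketch below is a back-of-the-envelope model, not a billing calculator: `fan_out_per_stage` and `attempts_per_call` are hypothetical inputs you would estimate from your own architecture diagram and retry metrics.

```python
def downstream_calls(events: int, fan_out_per_stage: list[int],
                     attempts_per_call: float = 1.0) -> float:
    """Estimate total delivery attempts across all stages for `events` upstream events.

    fan_out_per_stage[i] is how many consumers each message reaches at stage i.
    attempts_per_call is the average number of delivery attempts (1.0 = no retries).
    """
    total = 0.0
    messages = float(events)
    for consumers in fan_out_per_stage:
        messages *= consumers                # each message is copied to every consumer
        total += messages * attempts_per_call
    return total


# 1,000 events, fanned out to 3 consumers, each of which emits to 2 more.
print(downstream_calls(1_000, [3, 2]))
print(downstream_calls(1_000, [3, 2], attempts_per_call=1.5))
```

With no retries the 1,000 events become 9,000 deliveries; at an average of 1.5 attempts per delivery they become 13,500. The model assumes retries do not themselves emit further events, so treat it as a lower bound when a flapping consumer republishes on each attempt.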

2. “Log everything” without retention or sampling strategy

Pattern:

- Every Lambda logs entire request/response and full stack traces at INFO.

- Logs retained for 90–365 days “for debugging/compliance.”

Failure modes:

- CloudWatch Logs eclipses Lambda cost.

- Searching logs during incidents becomes slow and noisy.

Anti-pattern smell:

- Application logs repeatedly contain entire payloads, including PII or large JSON blobs.

Mitigation:

- Define log levels and what’s allowed at each.

- Use structured logging with field allowlists.

- Short retention (7–14 days) for verbose logs; archive only necessary aggregates.
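The allowlist and sampling ideas above can be sketched with the standard library alone. The field names, the `ALLOWED_FIELDS` set, and the 1% sample rate are assumptions for illustration; pick values that match your own compliance and debugging needs.

```python
import json
import logging
import random

ALLOWED_FIELDS = {"request_id", "flow_id", "tenant_id"}  # hypothetical allowlist
DEBUG_SAMPLE_RATE = 0.01  # assumed: keep roughly 1% of full payload dumps

logger = logging.getLogger("app")


def safe_fields(event: dict) -> dict:
    """Keep only allowlisted top-level fields so payloads and PII stay out of INFO logs."""
    return {k: v for k, v in event.items() if k in ALLOWED_FIELDS}


def log_outcome(event: dict, outcome: str, rng=random.random) -> str:
    """Emit one structured INFO line per invocation; dump the payload at DEBUG only for a sample."""
    line = json.dumps({**safe_fields(event), "outcome": outcome}, sort_keys=True)
    logger.info(line)
    if rng() < DEBUG_SAMPLE_RATE:
        # Sampled payload dump; still subject to whatever redaction your policy requires.
        logger.debug(json.dumps(event, default=str))
    return line


print(log_outcome({"request_id": "r-1", "tenant_id": "t-9", "card_number": "4111"}, "ok"))
```

Passing `rng` as a parameter keeps the sampling decision testable. The INFO line stays small and predictable, which is what keeps ingestion volume bounded as traffic grows.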

3. Over-fragmented functions (“nano‑functions”)

Pattern:

- Dozens or hundreds of tiny Lambdas, each doing trivial work in a Step Functions/queue pipeline.

Failure modes:

- High coordination overhead; each hop adds latency and cost.

- Hard to reason about end-to-end behaviour.

- Observability is fragmented across many functions.

Anti-pattern smell:

- Entire business flows drawn as 10+ Lambda icons in sequence, each <50 lines of code.

Mitigation:

- Merge tightly coupled steps into a single function where it simplifies failure semantics.

- Use Step Functions sparingly for real workflow logic, not just linear delegation.

4. Unbounded concurrency on “cheap” upstreams

Pattern:

- API Gateway or EventBridge triggers Lambda with very high concurrency limits.

- Downstream is RDS, an internal HTTP service, or a third-party API.

Failure modes:

- Downstream resource spikes, hits connection limits, or gets rate-limited.

- Lambda retries amplify the overload.

Anti-pattern smell:

- “Serverless scales automatically” used as an excuse not to design for capacity.

Mitigation:

- Set reserved/max concurrency per Lambda.

- Add SQS in front of constrained downstreams to smooth bursts.

- Enforce and test rate limits toward internal and external dependencies.
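Picking a concurrency cap does not need to be guesswork. A minimal sketch, assuming you know the downstream's connection limit and your average invocation duration: Little's law gives the concurrency a traffic level needs, and the downstream's budget gives the ceiling you can afford. The 80% headroom default is an arbitrary assumption.

```python
import math


def required_concurrency(requests_per_second: float, avg_duration_s: float) -> int:
    """Little's law: in-flight invocations ~= arrival rate x time spent per invocation."""
    return math.ceil(requests_per_second * avg_duration_s)


def safe_reserved_concurrency(downstream_max_connections: int,
                              connections_per_invocation: int = 1,
                              headroom_percent: int = 80) -> int:
    """Cap concurrency so Lambda cannot exhaust the downstream's connection budget."""
    budget = downstream_max_connections * headroom_percent // 100
    return max(1, budget // connections_per_invocation)


needed = required_concurrency(requests_per_second=200, avg_duration_s=0.25)
cap = safe_reserved_concurrency(downstream_max_connections=100)
print(f"needed={needed} cap={cap} throttling_expected={needed > cap}")
```

If `needed` exceeds `cap`, the function will throttle at peak, so either the downstream needs more capacity or the burst needs a queue in front of it. The cap itself can be applied with the Lambda `PutFunctionConcurrency` API (in boto3, `put_function_concurrency` with `ReservedConcurrentExecutions`) or the equivalent setting in your IaC tool.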

5. Platform “abstractions” that hide AWS semantics

Pattern:

- Internal framework wraps Lambda/events with “simple” decorators or codegen.

- Developers don’t see or think about retries, timeouts, or payload sizes.

Failure modes:

- Unexpected retries causing duplicate side effects.

- Payloads silently truncated or dropped on the floor.

- Undocumented coupling to AWS limits.

Anti-pattern smell:

- Internal SDKs without clear documentation of underlying AWS behaviours.

Mitigation:

- Keep abstractions thin and leaky by design; surface AWS error modes.

- Provide templates and guardrails, not magic.
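One AWS behaviour worth surfacing rather than hiding is at-least-once delivery. The sketch below shows a thin idempotency guard; `InMemoryStore` is a stand-in for a conditional write in a real store (for example a DynamoDB put guarded by `attribute_not_exists`), and the `idempotency_key` field is a hypothetical convention the producer would have to follow.

```python
class InMemoryStore:
    """Stand-in for a durable store that supports an atomic 'claim if absent' write."""

    def __init__(self):
        self._seen = set()

    def claim(self, key: str) -> bool:
        """Return True only for the first caller that claims this key."""
        if key in self._seen:
            return False
        self._seen.add(key)
        return True

    def release(self, key: str) -> None:
        """Give the key back so a later retry can process the event."""
        self._seen.discard(key)


def handle_once(event: dict, store: InMemoryStore, side_effect) -> str:
    """Run side_effect at most once per idempotency key, even if the event is redelivered."""
    key = event["idempotency_key"]  # hypothetical field set by the producer
    if not store.claim(key):
        return "duplicate_skipped"
    try:
        side_effect(event)
    except Exception:
        store.release(key)  # let the retry through instead of silently dropping work
        raise
    return "processed"


store = InMemoryStore()
charges = []
event = {"idempotency_key": "order-42", "amount": 10}
print(handle_once(event, store, charges.append))
print(handle_once(event, store, charges.append))  # simulated retry of the same event
```

The point of keeping this explicit in a template, rather than buried in a decorator, is that developers see where the key comes from and what happens on failure.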

Practical playbook (what to do in the next 7 days)

Assume you already have a decent-sized AWS serverless estate. Here’s a focused, one‑week plan.

Day 1–2: Baseline cost and hot paths

Identify top 10 most expensive components

- Group by:

- Lambda functions (by cost and by total duration).

- API Gateway (REST vs HTTP).

- EventBridge, SQS, Kinesis.

- CloudWatch Logs.

- Output: A simple table with service, monthly cost, owner team.

Map 3–5 critical user/business flows

- For each: draw execution + flow planes:

- Entry point (API Gateway / event).

- All Lambdas, queues, streams, DBs touched.

- Mark:

- Where retries happen.

- Where fan-out occurs.

- Where external dependencies are called.
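For the top-10 table from the first step, a few lines of Python over an exported cost report are usually enough. This sketch assumes you have already flattened the report into rows with `service`, `owner`, and `monthly_cost` keys; those names and the sample figures are hypothetical, not a real export format.

```python
from collections import defaultdict


def top_components(rows: list[dict], n: int = 10) -> list[dict]:
    """Sum monthly cost per (service, owner) pair and return the n most expensive."""
    totals = defaultdict(float)
    for row in rows:
        totals[(row["service"], row["owner"])] += row["monthly_cost"]
    ranked = sorted(totals.items(), key=lambda kv: kv[1], reverse=True)[:n]
    return [
        {"service": service, "owner": owner, "monthly_cost": round(cost, 2)}
        for (service, owner), cost in ranked
    ]


# Made-up rows purely to show the shape of the input and output.
rows = [
    {"service": "CloudWatch Logs", "owner": "payments", "monthly_cost": 4200.0},
    {"service": "Lambda", "owner": "payments", "monthly_cost": 1500.0},
    {"service": "Lambda", "owner": "payments", "monthly_cost": 900.0},
    {"service": "API Gateway (REST)", "owner": "web", "monthly_cost": 3100.0},
]
for entry in top_components(rows, n=3):
    print(entry)
```

Grouping by owner as well as service matters here: the goal of the table is to know who to talk to, not just which line item is large.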

Day 3: Concurrency and backpressure review

Review concurrency settings

- For each Lambda in critical flows:

- Check reserved concurrency.

- Check provisioned concurrency.

- Ask:

- Does this match downstream capacity?

- Are we paying for provisioned concurrency that we don’t need 24/7?

Introduce explicit backpressure where missing

- If any flow goes directly from API Gateway/EventBridge → Lambda → RDS/critical internal API:

- Consider SQS + Lambda as a buffer.

- Or set a reasonable reserved concurrency to cap load.
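If you go the SQS + Lambda route, the consumer should fail individual messages rather than whole batches, otherwise one bad record causes the entire batch to be retried. A minimal sketch, assuming the event source mapping has `ReportBatchItemFailures` enabled; `make_handler` and the `process` callback are illustrative names, not part of any AWS SDK.

```python
import json


def make_handler(process):
    """Build an SQS-triggered Lambda handler that reports per-message failures."""

    def handler(event, context):
        failures = []
        for record in event["Records"]:
            try:
                process(json.loads(record["body"]))
            except Exception:
                # Only this message returns to the queue; the rest of the batch is deleted.
                failures.append({"itemIdentifier": record["messageId"]})
        return {"batchItemFailures": failures}

    return handler


def process(message: dict) -> None:
    """Hypothetical downstream write; rejects messages without an id."""
    if "id" not in message:
        raise ValueError("missing id")


handler = make_handler(process)
sample_event = {
    "Records": [
        {"messageId": "m-1", "body": json.dumps({"id": 1})},
        {"messageId": "m-2", "body": json.dumps({"oops": True})},
    ]
}
print(handler(sample_event, None))
```

Pair this with a DLQ and a sensible `maxReceiveCount` on the queue so that a message that always fails does not retry forever.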

Day 4: Logging and observability hygiene

Classify log usage

- For each major function/service:

- What’s being logged at INFO?

- What is the log retention policy?

Quick wins

- Reduce retention for non-critical logs to 7–30 days.

- Remove payload dumps from INFO; move to DEBUG with sampling.

- Add minimal structured fields: request_id, flow_id, tenant_id (if multi-tenant), outcome.

Tracing

- Ensure