Serverless on AWS Without Surprises: Designing for Cost, Reliability, and Observability

Why this matters this week

AWS serverless (Lambda, API Gateway, EventBridge, Step Functions, DynamoDB, SQS, SNS) is no longer “experimental” for most orgs. It’s a core part of production stacks, especially in cost-sensitive and spiky workloads.

What’s changed recently isn’t that serverless suddenly got cheaper or faster in a headline-grabbing way. It’s that:

- More teams are hitting second- and third-order effects:

- Difficult debugging across asynchronous services

- Cost blow-ups from “cheap” components used at scale

- Latency and concurrency surprises

- Platform teams are being asked to provide serverless as a product to internal users, not as a side quest.

- Finance and security are now scrutinising “we’ll just use Lambda” the same way they scrutinise Kubernetes clusters.

If you run workloads on AWS, you need a governance model and some concrete patterns, not vibes. This post outlines a mental model, failure modes, and a one-week playbook to make your serverless architecture more predictable on cost, reliability, and observability.

What’s actually changed (not the press release)

Recent AWS changes that materially affect how you design and operate serverless systems:

- Pricing and cost visibility are clearer, but still trap-filled

- Lambda: tiered pricing and duration-based billing are well understood, but:

- More teams are hitting data transfer and cross-service costs, not Lambda runtime costs.

- DynamoDB, S3, and Step Functions can dominate your serverless bill if you model data and workflows naively.

- Cost allocation with tags and cost categories is more widely adopted, so serverless cost anomalies are now visible to finance, not just ops.


- Reliability features exist, but require configuration

- SQS + Lambda partial batch response improves failure handling but:

- Many teams still run with defaults and process huge batches monolithically, amplifying blast radius.

- EventBridge and Step Functions have gained features (DLQs, retries, circuit-breaker-like patterns), but:

- They don’t help if you never configured them or if you wrap everything in one giant happy-path workflow.
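To make the partial batch response point concrete, here is a minimal sketch of an SQS-triggered handler that reports failures per message rather than failing the whole batch. It assumes the event source mapping has `ReportBatchItemFailures` enabled; `process_record` and the `order_id` check are hypothetical placeholders for your own logic.

```python
import json


def process_record(body: dict) -> None:
    """Hypothetical business logic; raises on a message it cannot handle."""
    if "order_id" not in body:
        raise ValueError("missing order_id")


def handler(event: dict, context: object) -> dict:
    """SQS-triggered Lambda handler that reports failures per message."""
    failures = []
    for record in event.get("Records", []):
        try:
            process_record(json.loads(record["body"]))
        except Exception:
            # Only this message is reported as failed and becomes visible
            # on the queue again; the rest of the batch counts as processed.
            failures.append({"itemIdentifier": record["messageId"]})
    return {"batchItemFailures": failures}
```

Without the per-message report, one bad message causes the whole batch to be retried, which is exactly the blast-radius amplification described above.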


- Observability tooling is maturing, but fragmented

- CloudWatch Logs Insights, X-Ray, and embedded metrics + traces from Lambda can give good visibility, but:

- Out-of-the-box telemetry is not enough; you still need consistent correlation IDs and structured logging.

- Distributed tracing across serverless and container workloads is now feasible, but:

- Many teams haven’t standardised a trace ID propagation contract, so traces break at integration boundaries.


- Platform engineering expectations have shifted

- Internal platforms are now expected to give:

- Golden paths for event-driven design

- Pre-baked observability and guardrails

- Cost controls and policies for environments/teams

- “Just let teams create any Lambda they want” no longer flies at orgs with tens of accounts and hundreds of services.


How it works (simple mental model)

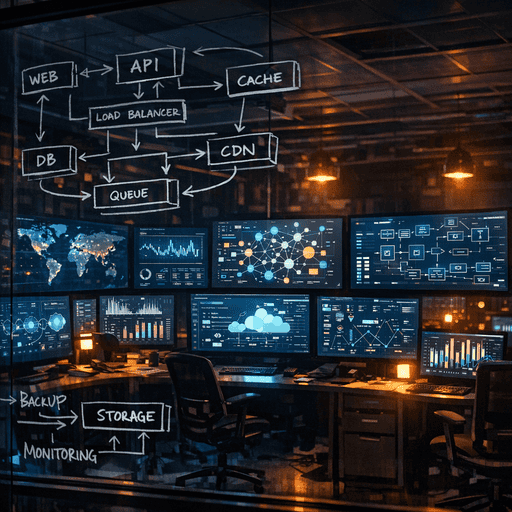

Treat serverless on AWS as three interlocking systems:

- Compute fabric (Lambda, Fargate-on-demand bits)

- Event fabric (API Gateway, EventBridge, SQS, SNS, Kinesis)

- State fabric (DynamoDB, S3, RDS, Step Functions state, caches)

Designing robust systems is about controlling contracts and blast radius across these three fabrics.

A useful mental model:

- Sync edge, async core

- At the edges (APIs, user-facing operations), you typically:

- Use API Gateway + Lambda or ALB + Lambda/Fargate

- Need predictable latency and clear error semantics

- Inside the core:

- Use events (EventBridge, SQS, SNS, Kinesis) to decouple producers/consumers

- Accept eventual consistency and design for idempotency
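Designing for idempotency in the async core can be sketched as a consumer that claims an event ID before doing any work. This is an illustration only: the in-memory store stands in for a durable one (for example a DynamoDB conditional write), and the `event_id` and `payload` fields are assumed names, not an AWS contract.

```python
class InMemoryIdempotencyStore:
    """Stand-in for a durable store such as a DynamoDB conditional write."""

    def __init__(self) -> None:
        self._seen = set()

    def claim(self, key: str) -> bool:
        """Return True the first time a key is seen, False on any repeat."""
        if key in self._seen:
            return False
        self._seen.add(key)
        return True


def handle_event(event: dict, store: InMemoryIdempotencyStore, side_effects: list) -> str:
    # The producer is assumed to set a stable "event_id" on every event.
    if not store.claim(event["event_id"]):
        return "duplicate_skipped"
    side_effects.append(event["payload"])  # the real work would happen here
    return "processed"
```

Claiming before doing the work trades one risk for another: a crash between the claim and the side effect loses the event, so a production version would typically record completion status as well.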


- Fan-in/fan-out control

- Downstream systems should never be surprised by upstream throughput.

- Mechanisms:

- SQS + Lambda with max concurrency limits

- Reserved concurrency or provisioned concurrency on hot paths

- Throttling at API Gateway and EventBridge rules where needed

- Data access is the real coupling

- Whether compute is Lambda or containers, the real coupling is in:

- Data model (how many tables/buckets/secrets you touch)

- Access patterns (chatty vs coarse-grained)

- For cost and reliability:

- Prefer fewer, well-designed access patterns to DynamoDB and S3

- Consider read/write amplification when splitting functions


- Every async boundary is a failure boundary

- Every message, event, and step can:

- Fail transiently (retries help)

- Fail permanently (DLQs or parking lots needed)

- Succeed but violate expectations (semantic errors, hidden data quality issues)

- Design for:

- Observability at each boundary

- Explicit error-handling paths, not “we’ll monitor CloudWatch alarms”


Where teams get burned (failure modes + anti-patterns)

- Lambda sprawl with no boundaries

- Symptom:

- Hundreds of Lambdas, each with slightly different IAM, runtime, and logging behaviour.

- Impact:

- Security review pain, inconsistent observability, hard-to-predict costs.

- Anti-pattern:

- Each team handcrafts their own CDK/CloudFormation for functions.

- Fix:

- Provide a “Lambda module” or blueprint (Terraform, CDK construct, or internal platform API) with:

- Standard IAM patterns

- Logging and metrics baked in

- Sensible concurrency and timeout defaults


- Over-using Step Functions as a catch-all workflow engine

- Symptom:

- Massive state machines that implement business logic, error handling, and routing in YAML/JSON.

- Impact:

- High Step Functions costs (state transitions), brittle workflows that are hard to test and version.

- Example:

- A fintech batch pipeline that modelled every validation and enrichment as a separate Step Functions task. Cost and latency exploded under growth; debugging required replaying whole workflows.

- Fix:

- Use Step Functions sparingly:

- Orchestrate coarse-grained tasks, not every micro-step.

- Keep business logic in code, workflows for “glue” only.
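The cost effect of step granularity is simple arithmetic, since Standard workflows bill per state transition. The sketch below compares a fine-grained and a coarse-grained design; the per-transition price, the execution volume, and the step counts are all illustrative assumptions, so check current regional pricing and your own numbers before drawing conclusions.

```python
def monthly_transition_cost(executions_per_month: int,
                            transitions_per_execution: int,
                            price_per_transition_usd: float) -> float:
    """Cost of Standard-workflow state transitions for one month."""
    return executions_per_month * transitions_per_execution * price_per_transition_usd


# Assumed inputs; substitute your own volumes and current regional pricing.
PRICE = 0.000025          # USD per state transition (assumption)
EXECUTIONS = 5_000_000    # executions per month (assumption)

fine_grained = monthly_transition_cost(EXECUTIONS, 40, PRICE)   # 40 steps each
coarse_grained = monthly_transition_cost(EXECUTIONS, 8, PRICE)  # 8 steps each
print(f"fine-grained: ${fine_grained:,.2f}  coarse-grained: ${coarse_grained:,.2f}")
```

Under these assumptions the fine-grained design costs five times as much for the same business outcome, before counting the Lambda invocations behind each step.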


- Naive DynamoDB usage

- Symptom:

- 20+ tables for related data, random partition keys, and high RCU/WCU costs.

- Impact:

- Hot partitions, throttling, unpredictable costs.

- Example:

- A SaaS product used a per-tenant table pattern without understanding partition key distribution. One enterprise tenant’s usage caused throttling and retries across their entire shard of Lambdas.

- Fix:

- Design for:

- Access patterns first (what queries exist?)

- Reasonable partition key cardinality

- Single-table design where it reduces cross-table joins and calls
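As a rough illustration of access-pattern-first key design, the helpers below build composite keys for a single table and spread one heavy tenant's writes across several partition key values. The `TENANT#` and `ORDER#` prefixes, the attribute names `PK` and `SK`, and the shard count are naming assumptions for this sketch, not anything DynamoDB requires.

```python
import hashlib


def order_key(tenant_id: str, order_id: str) -> dict:
    """Composite key so one Query on PK returns all of a tenant's orders."""
    return {"PK": f"TENANT#{tenant_id}", "SK": f"ORDER#{order_id}"}


def sharded_pk(tenant_id: str, item_id: str, shards: int = 8) -> str:
    """Spread one heavy tenant's writes across several partition key values."""
    digest = hashlib.sha256(item_id.encode("utf-8")).digest()
    shard = digest[0] % shards  # deterministic, so reads can recompute it
    return f"TENANT#{tenant_id}#SHARD#{shard}"
```

Sharding is a trade-off: writes stop concentrating on one key, but "all items for this tenant" now needs one query per shard, so it is worth applying only to tenants that actually run hot.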


- Event-driven without event contracts

- Symptom:

- EventBridge and SNS carry loosely-structured JSON that changes frequently.

- Impact:

- Consumers break silently; data quality issues are hard to detect.

- Example:

- An ecommerce company changed an order event payload format; downstream analytics Lambdas silently dropped events for hours due to unhandled schema differences.

- Fix:

- Treat events like APIs:

- Versioned schemas (even if just disciplined JSON schemas in code)

- Backwards-compatible changes

- Contract tests between producers and consumers
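One way to treat events like APIs is to have every consumer check an explicit type and version and fail loudly on anything it does not recognise. The sketch below assumes a hypothetical `order.created` event with `type`, `version`, and `data` fields, where version 2 adds an optional `currency`; none of these names come from EventBridge or SNS themselves.

```python
SUPPORTED = {("order.created", 1), ("order.created", 2)}


class UnsupportedEvent(Exception):
    """Raised instead of silently dropping an event the consumer cannot read."""


def parse_order_created(event: dict) -> dict:
    key = (event.get("type"), event.get("version"))
    if key not in SUPPORTED:
        raise UnsupportedEvent(f"unsupported event {key!r}")
    data = event["data"]
    # Version 2 is assumed to add an optional "currency" field; version 1
    # events get a default so the change stays backwards compatible.
    return {
        "order_id": data["order_id"],
        "total": data["total"],
        "currency": data.get("currency", "USD"),
    }
```

Raising on an unknown version sends the event down the retry and DLQ path, where it is visible, instead of letting it disappear in a quiet `except` or a skipped branch.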


- Observability bolted on later

- Symptom:

- When something fails, teams grep CloudWatch Logs by hand; no end-to-end trace.

- Impact:

- MTTR measured in hours or days; blame falls on “serverless is opaque.”

- Fix:

- From day one:

- Correlation ID in headers and all logs

- Structured logs (JSON)

- Consistent metric names and dimensions

- Sampling strategy for traces to avoid cost blow-up
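Structured logs with a correlation ID need very little code. Here is a minimal sketch using only the standard `logging` module; the field names (`level`, `message`, `logger`, `correlation_id`) and the `build_logger` helper are assumptions for illustration, and a real setup would likely add timestamps and request context.

```python
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object per line."""

    def format(self, record: logging.LogRecord) -> str:
        return json.dumps({
            "level": record.levelname,
            "message": record.getMessage(),
            "logger": record.name,
            # Set via the `extra` argument; None on records that lack it.
            "correlation_id": getattr(record, "correlation_id", None),
        })


def build_logger(name: str = "app") -> logging.Logger:
    logger = logging.getLogger(name)
    if not logger.handlers:  # avoid stacking handlers on warm invocations
        handler = logging.StreamHandler()
        handler.setFormatter(JsonFormatter())
        logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    logger.propagate = False  # keep the root logger from emitting a second copy
    return logger


log = build_logger()
log.info("order accepted", extra={"correlation_id": "req-123"})
```

Once every function logs the same field names, a single CloudWatch Logs Insights filter on `correlation_id` can follow one request across functions.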


- Ignoring cold starts and concurrency limits until launch

- Symptom:

- Everything passes QA; production launch causes random 1–3 second spikes and throttling.

- Impact:

- User-facing latency, partial outages.

- Fix:

- For latency-sensitive paths:

- Consider provisioned concurrency or warming strategies

- Hard-test with realistic traffic patterns

- Set per-function reserved concurrency to avoid noisy neighbours
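Before load testing, it helps to estimate how much concurrency a path will need. By Little's law, concurrent executions are roughly the request rate multiplied by the average duration. The numbers below (800 requests per second, 250 ms, a limit of 1,000) are assumptions chosen for the example; use figures from your own traffic and your account's actual quota.

```python
import math


def required_concurrency(requests_per_second: float, avg_duration_seconds: float) -> int:
    """Little's law: concurrent executions = arrival rate x time in the function."""
    return math.ceil(requests_per_second * avg_duration_seconds)


def headroom(peak_concurrency: int, concurrency_limit: int) -> float:
    """Fraction of the limit still unused at peak (negative means throttling)."""
    return 1 - peak_concurrency / concurrency_limit


# Assumed launch-day numbers; replace with values from your load test.
peak = required_concurrency(requests_per_second=800, avg_duration_seconds=0.25)
print(peak, headroom(peak, concurrency_limit=1000))
```

Cold starts lengthen the average duration during a spike, so the estimate is a floor rather than a ceiling; that is one reason to test with realistic burst patterns and not only steady load.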


Practical playbook (what to do in the next 7 days)

Assuming you already run serverless workloads on AWS, here’s what’s worth doing immediately.

Day 1–2: Establish visibility and cost baselines

- Tag and attribute costs

- Ensure Lambda, Step Functions, API Gateway, DynamoDB, and SQS resources are tagged by:

- System or product

- Environment (prod/stage/dev)

- Team/owner

- In your cost tooling, generate:

- Top 10 serverless services by monthly cost

- Top 10 functions by cost and invocation count
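A tagging audit can start as a small script over whatever resource inventory you already export. The sketch below assumes the inventory has been loaded into a plain dictionary of resource name to tags, and that the required tag keys are `system`, `environment`, and `owner`; both the input shape and the key names are assumptions to adapt to your own conventions.

```python
REQUIRED_TAGS = ("system", "environment", "owner")  # assumed tag keys


def missing_tags(resources: dict) -> dict:
    """Map each resource name to the required tag keys it lacks."""
    report = {}
    for name, tags in resources.items():
        # A key that is present but empty is treated as missing.
        missing = [key for key in REQUIRED_TAGS if not tags.get(key)]
        if missing:
            report[name] = missing
    return report
```

Resources that appear in this report are the ones whose spend cannot be attributed to a system, environment, or team in the cost views listed above.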


- Instrument a single golden path properly

- Choose one important user flow (e.g., “create order”).

- Ensure:

- A correlation ID is generated at edge (API Gateway/ALB) and propagated through all Lambdas and async messages.

- Logs are structured and include the correlation ID.

- You can trace the full path using your APM/tracing stack or X-Ray.
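Propagating the correlation ID across a sync-to-async hop might look like the sketch below: take or mint the ID at the API edge, attach it as an SQS message attribute, and read it back in the consumer. The header name `x-correlation-id` and the attribute name `correlation_id` are assumed conventions; the dictionary shapes follow the API Gateway proxy event, the SQS `MessageAttributes` parameter, and the Lambda SQS record format.

```python
import uuid
from typing import Optional

HEADER = "x-correlation-id"  # assumed header name; pick one and standardise it


def correlation_id_from_api_event(event: dict) -> str:
    """Reuse the caller's ID if present, otherwise mint one at the edge."""
    headers = {k.lower(): v for k, v in (event.get("headers") or {}).items()}
    return headers.get(HEADER) or str(uuid.uuid4())


def sqs_message_attributes(correlation_id: str) -> dict:
    """Value to pass as MessageAttributes when sending the message to SQS."""
    return {"correlation_id": {"DataType": "String", "StringValue": correlation_id}}


def correlation_id_from_sqs_record(record: dict) -> Optional[str]:
    """Read the ID back inside the consuming Lambda."""
    attr = record.get("messageAttributes", {}).get("correlation_id")
    return attr["stringValue"] if attr else None
```

Keeping these three helpers in one shared module is the simplest form of a propagation contract: every producer and consumer on the golden path uses the same names.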

Day 3–4: Guardrails and contracts

- Define event contracts for one critical event stream

- Pick a high-value stream (orders, payments, user lifecycle).

- Document:

- Event schema and semantics

- Versioning strategy (e.g., type + version fields)

- Backwards-compatibility rules

- Add tests:

- Producer tests that verify emitted events comply with this schema

- Consumer tests that verify they handle both the current and the next schema version

- Implement standard Lambda module/blueprint

- Create one reusable pattern (via CDK/Terraform/module) with:

- Standard IAM role with least-privilege pattern

- Logging config (JSON, correlation ID middleware)

- Timeout, memory, and concurrency defaults

- Optional DLQ or on-failure destination

- Migrate 1–2 non-critical Lambdas as a pilot.
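Whatever tool renders the blueprint, the defaults themselves can live in one validated place. The sketch below is a hypothetical shape for that, not a CDK or Terraform API: `LambdaDefaults`, `function_settings`, and the specific default values are all illustrative, while the validation bounds reflect Lambda's limits of 900 seconds for timeout and 128 to 10,240 MB for memory.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class LambdaDefaults:
    """Hypothetical platform defaults a blueprint could apply to every function."""
    timeout_seconds: int = 10
    memory_mb: int = 512
    reserved_concurrency: int = 20
    log_format: str = "json"
    on_failure_destination: str = ""  # empty string means "not configured"

    def __post_init__(self) -> None:
        # Lambda allows timeouts up to 900 s and memory from 128 to 10,240 MB.
        if not 1 <= self.timeout_seconds <= 900:
            raise ValueError("timeout_seconds must be between 1 and 900")
        if not 128 <= self.memory_mb <= 10240:
            raise ValueError("memory_mb must be between 128 and 10240")


def function_settings(name: str, **overrides) -> dict:
    """Merge team overrides onto the defaults, validating the result."""
    return {"name": name, **asdict(LambdaDefaults(**overrides))}
```

For example, `function_settings("orders-api", memory_mb=1024)` keeps every other default, so a team only states what differs from the golden path.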


Day 5–6: Cost and reliability hardening

- Spike on two cost hot spots

- From your cost report, take top two expensive components:

- For DynamoDB:

- Validate access patterns; see if you’re over-fetching or doing multiple round-trips that could be coalesced.

- For Step Functions:

- Check if you’re using too many fine-grained steps instead of coarser
