Your Data Governance Story is the Hardest Bug in Your AI Stack

Why this matters right now

Most AI talk is about model quality and cost per 1K tokens. The real production risk is usually somewhere else:

- “What data did this model see?”

- “Who approved this prompt template?”

- “Can we prove we deleted that customer’s data?”

- “Why did the model leak internal code in that answer?”

You can duct-tape a prototype together with a “don’t send PII” rule and a few redaction regexes. But once you:

- expose AI to real customer data,

- let it read internal systems,

- or let non-engineers design workflows,

you’re in data governance and model risk management territory, whether you like it or not.

The constraints are tightening:

- Customers now add AI-specific clauses to DPAs and MSAs.

- SOC2/ISO auditors are starting to ask about LLMs explicitly.

- Security teams are blocking AI deployment until they get answers on retention and auditability.

- Regulators (data protection authorities enforcing GDPR, sector-specific bodies) are starting to notice when LLMs create shadow data copies.

If you treat “AI governance” as slideware, you’ll spend the next year firefighting incidents and retrofitting controls into brittle pipelines.

What’s actually changed (not the press release)

Three practical shifts have raised the bar for privacy and governance in AI systems:

1. Your inference boundary moved

Before LLMs, “data processing” usually meant:

- You control the code, runtime, and data store.

- You can point to deterministic transformations.

With hosted LLMs and vector DBs:

- Data leaves your VPC more often.

- Third-party models may retain logs or use data for training unless you opt out.

- “Inference” might flow through multiple vendors (proxy, router, model, monitoring).

Impact: Data flow diagrams that used to be one box are now 6+ boxes and 3 companies. Every hop is a governance decision.

2. Models are probabilistic, but obligations are not

Security/compliance language is binary:

- “We do not store PII in logs.”

- “We delete user data within 30 days.”

- “Models cannot reveal secrets.”

LLMs are not binary:

- Redaction can fail on edge cases.

- A model can reconstruct something sensitive from context.

- Fine-tuned models can “remember” outliers even if you “deleted the record.”

Impact: Your traditional policy-as-doc doesn’t map cleanly; you need policy-as-code with confidence bounds and explicit residual risk.

3. Non-engineers can now program the system

Prompt templates and “no-code” builders effectively let:

- Ops teams define routing logic.

- PMs wire data sources into prompts.

- Analysts instruct agents to take actions in SaaS tools.

This is power, but also:

- New attack surface: prompt-based data exfiltration.

- Drift in behaviour with zero code changes.

- Hard-to-audit “programs” living in UI configs, not git.

Impact: Change management, approvals, and auditability must extend to prompts, flows, and policies—not just code.

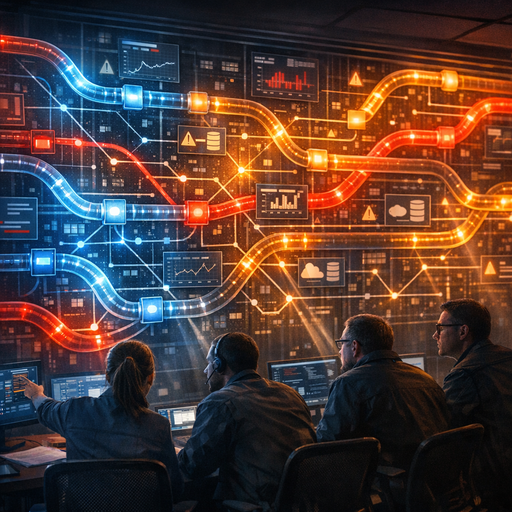

How it works (simple mental model)

Use this as a mental model to reason about privacy & governance in your AI stack:

1. Data classes → 2. Policy rules → 3. Enforcement planes → 4. Evidence

1. Data classes: know what you’re dealing with

At minimum, define:

- Public: can leave your environment; no restrictions.

- Internal: limited to employees; not customer-specific.

- Sensitive: customer data, internal code, financials, etc.

- Regulated: anything under GDPR, HIPAA, PCI, sector rules.

Then refine by:

- Identifiability: PII, quasi-identifiers, anonymous.

- Longevity: retention constraints (e.g., 30, 90, 365 days).

- Location constraints: must stay in-region / in-VPC.

Mechanism: A data classification schema you can tag in:

- Schemas / DB tables

- Message metadata (Kafka, queues)

- File storage prefixes / object tags

- Prompt metadata
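A classification schema like this can be small enough to fit in one file. The sketch below is one possible shape, not a standard: the `DataTag` fields, the resource names, and the rule that a prompt inherits the strictest class of its inputs are all assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class DataClass(IntEnum):
    """Ordered so that a higher value means stricter handling."""
    PUBLIC = 0
    INTERNAL = 1
    SENSITIVE = 2
    REGULATED = 3


@dataclass(frozen=True)
class DataTag:
    data_class: DataClass
    identifiability: str = "anonymous"    # "pii", "quasi", or "anonymous"
    retention_days: Optional[int] = None  # None: no retention limit defined yet
    region: Optional[str] = None          # None: no location constraint


def strictest(tags: list[DataTag]) -> DataClass:
    """A prompt built from several sources inherits the strictest class."""
    return max((t.data_class for t in tags), default=DataClass.PUBLIC)


# Hypothetical resources, for illustration only.
TAGS = {
    "db.tickets": DataTag(DataClass.SENSITIVE, "pii", retention_days=90, region="eu-west-1"),
    "s3://docs-public/": DataTag(DataClass.PUBLIC),
}

prompt_class = strictest([TAGS["db.tickets"], TAGS["s3://docs-public/"]])
print(prompt_class.name)  # SENSITIVE
```

The same tag object can travel as message metadata or prompt metadata, so every hop sees the class without re-deriving it.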

2. Policy rules: what’s allowed, where

Translate your SOC2/ISO/privacy statements into simple rules:

- “Sensitive and Regulated data must not leave the primary cloud region.”

- “Anything marked Regulated cannot be used for model training.”

- “Access to Sensitive must be logged with user and purpose.”

Write them in a machine-readable form (policy-as-code). The shape looks like:

```yaml
rule: "no_pii_to_third_party_llm"
when:
  data_class: ["PII", "Regulated"]
  destination: ["external_vendor"]
enforce:
  action: "block"
  log: true
  notify: "security_oncall"
```

This is the bridge between abstract policy (“we protect PII”) and concrete enforcement.
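To make the rule above executable, you need something that evaluates it against a request. A minimal sketch, assuming the rule has already been parsed into a dict and that each request carries `data_class` and `destination` lists; the `evaluate` function and the request shape are hypothetical, not part of any particular policy engine.

```python
RULE = {
    "rule": "no_pii_to_third_party_llm",
    "when": {"data_class": ["PII", "Regulated"], "destination": ["external_vendor"]},
    "enforce": {"action": "block", "log": True, "notify": "security_oncall"},
}


def evaluate(rule: dict, request: dict) -> dict:
    """Return the enforcement decision for one outbound request.

    The rule matches when every field under `when` shares at least one value
    with the request. Note this sketch allows requests with missing tags;
    a stricter deployment might block untagged requests instead.
    """
    matched = all(
        set(trigger_values) & set(request.get(field, []))
        for field, trigger_values in rule["when"].items()
    )
    if not matched:
        return {"action": "allow", "rule": None}
    return {"rule": rule["rule"], **rule["enforce"]}


decision = evaluate(RULE, {"data_class": ["PII"], "destination": ["external_vendor"]})
print(decision["action"])  # block
```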

3. Enforcement planes: where reality happens

You’re enforcing in three places:

- Data plane – what physically flows where

  - Redaction / anonymization before calling models

  - VPC / private endpoints / peering

  - Tenant isolation by customer or project

- Control plane – who can configure what

  - RBAC on prompt templates and tools

  - Approval workflows for changing data access

  - Enforced defaults (e.g., “no retention” unless overridden)

- Governance plane – how you prove it

  - Central log of “who accessed what data with which model”

  - Config history for prompts, tools, and policies

  - Periodic checks (e.g., “show all flows that send PII to vendor X”)

Think of it like Kubernetes:

- Data plane: pods and traffic.

- Control plane: API server and controllers.

- Governance plane: admission webhooks, audit logs, policy reports.

4. Evidence: because “we think so” won’t pass an audit

“Trust us” doesn’t work for:

- Customers doing vendor due diligence.

- Auditors assessing SOC2/ISO controls.

- Regulators asking about a specific incident.

Evidence should be:

- Queryable: “show all prompts that touched user 123 last quarter.”

- Immutable: append-only logs; no silent edits.

- Explainable: human-readable events: who, what, when, why.

Without this, you can’t do root-cause analysis when a model leaks internal data in a user response.
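One way to get "queryable" and "immutable" at small scale is a hash-chained, append-only log. The sketch below is an in-memory illustration under that assumption; the `AuditLog` class and its field names are hypothetical, and a real deployment would back this with write-once storage rather than a Python list.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """Append-only log where each entry commits to the previous one."""

    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(entry):
        payload = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def append(self, who, what, why, subject_id):
        prev_hash = self._entries[-1]["hash"] if self._entries else "0" * 64
        entry = {
            "who": who, "what": what, "why": why, "subject_id": subject_id,
            "when": datetime.now(timezone.utc).isoformat(),
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._digest(entry)
        self._entries.append(entry)

    def query(self, subject_id):
        """Queryable: every event that touched one data subject."""
        return [e for e in self._entries if e["subject_id"] == subject_id]

    def verify(self):
        """Immutable: any silent edit breaks the hash chain."""
        prev = "0" * 64
        for e in self._entries:
            if e["prev_hash"] != prev or e["hash"] != self._digest(e):
                return False
            prev = e["hash"]
        return True


log = AuditLog()
log.append("svc-assistant", "read db.tickets", "answer support question", "user-123")
log.append("svc-assistant", "called model-a", "answer support question", "user-456")
print(len(log.query("user-123")), log.verify())  # 1 True
```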

Where teams get burned (failure modes + anti-patterns)

1. “We’ll fix governance after we prove value”

Pattern: ship pilots with real data under the promise of “we’ll harden it later.”

Failure modes:

- Pilot becomes production by accretion.

- No visibility on what’s been logged, cached, or used for later fine-tuning.

- Retroactive data deletion becomes nearly impossible.

Anti-pattern smell: no written list of which systems the pilot can touch.

2. Magical thinking about “no training” flags

Pattern: “The vendor says they don’t train on our data; we’re safe.”

Problems:

- That doesn’t address:

  - Log retention.

  - Subprocessors used for inference.

  - Support access to logs.

- It also doesn’t cover your downstream copies (vector DB, caches, traces).

Anti-pattern smell: DPIA or data flow diagram is just a one-line “vendor X API.”

3. Treating prompts like disposable strings

Pattern: prompts live in code or UI forms with no:

- Versioning

- Review

- Ownership

Problems:

- A “minor tweak” suddenly exposes internal ticket data to a public model.

- Prompts quietly embed sensitive config (API keys, internal URLs).

- You can’t reconstruct what the system was doing at incident time.

Anti-pattern smell: you can’t answer, “Who last changed this prompt?”

4. Vector search without retention thinking

Pattern: “We just drop embeddings into a managed vector DB.”

Problems:

- Embeddings may be partially invertible (the source text can sometimes be approximately reconstructed) or at least re-identifiable.

- No TTLs set; old data lives forever.

- Multi-tenant indexes mix customers with no isolation.

Anti-pattern smell: same vector index for sandbox, staging, and prod.
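The two fixes that matter most here, a TTL on every embedding and one index per tenant, can be sketched without any particular vector DB. The `TenantVectorStore` class below is a hypothetical in-memory stand-in; managed products expose their own TTL and namespace features, which this does not attempt to model.

```python
import time
from dataclasses import dataclass


@dataclass
class StoredVector:
    vector: list[float]
    expires_at: float  # Unix timestamp after which the entry must go


class TenantVectorStore:
    """One index per tenant; every entry carries an expiry."""

    def __init__(self):
        self._indexes: dict[str, dict[str, StoredVector]] = {}

    def upsert(self, tenant, doc_id, vector, ttl_seconds, now=None):
        now = time.time() if now is None else now
        index = self._indexes.setdefault(tenant, {})
        index[doc_id] = StoredVector(vector, now + ttl_seconds)

    def purge_expired(self, now=None):
        """Delete expired entries across all tenants; return how many went."""
        now = time.time() if now is None else now
        removed = 0
        for index in self._indexes.values():
            for doc_id in [d for d, v in index.items() if v.expires_at <= now]:
                del index[doc_id]
                removed += 1
        return removed

    def ids(self, tenant):
        """Reads are scoped to a single tenant's index."""
        return sorted(self._indexes.get(tenant, {}))


store = TenantVectorStore()
store.upsert("acme", "t1", [0.1, 0.2], ttl_seconds=10, now=0)
store.upsert("acme", "t2", [0.3, 0.4], ttl_seconds=100, now=0)
store.upsert("globex", "g1", [0.5, 0.6], ttl_seconds=10, now=0)
print(store.purge_expired(now=50), store.ids("acme"))  # 2 ['t2']
```

Running a separate store instance per environment would address the sandbox/staging/prod smell in the same way.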

5. Disconnect between security and product

Pattern: security team writes a generic “AI policy,” but:

- Product/ML teams build flows with zero reference to that policy.

- No shared vocabulary for risk levels or data classes.

- Friction leads to bypasses and “shadow AI” projects.

Anti-pattern smell: security hears about AI launch from the marketing site.

Practical playbook (what to do in the next 7 days)

Assume you already have at least one AI-based system live or close to it. Within a week, you can move from vibes to mechanism.

1. Inventory: “What’s live and what data does it touch?” (Day 1–2)

For each AI-powered feature or workflow:

List:

- Data sources (DBs, APIs, file stores)

- Destinations (models, vector DBs, logs, monitoring)

- Users (internal, external)

Answer concretely:

- Does PII flow through this?

- Does internal code/content flow through this?

- Which vendors see that data?

Capture this in a simple table; don’t wait for a perfect diagram.
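If the table lives in code rather than a spreadsheet, the periodic checks become one-liners. A rough sketch, with a hypothetical `Flow` record and made-up feature and vendor names:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Flow:
    feature: str
    source: str
    destination: str
    vendor: Optional[str]  # None means the destination is internal
    carries_pii: bool


# Hypothetical inventory rows, for illustration only.
INVENTORY = [
    Flow("support-assistant", "db.tickets", "hosted LLM", "vendor_x", True),
    Flow("support-assistant", "db.tickets", "vector DB", "vendor_y", True),
    Flow("docs-search", "s3://docs-public/", "hosted LLM", "vendor_x", False),
    Flow("code-review-bot", "git.internal", "internal model", None, False),
]


def pii_flows_to(vendor: str) -> list[Flow]:
    """Answer 'show all flows that send PII to vendor X'."""
    return [f for f in INVENTORY if f.vendor == vendor and f.carries_pii]


def vendors_seeing_pii() -> set[str]:
    return {f.vendor for f in INVENTORY if f.carries_pii and f.vendor is not None}


print([f.feature for f in pii_flows_to("vendor_x")])  # ['support-assistant']
print(sorted(vendors_seeing_pii()))                   # ['vendor_x', 'vendor_y']
```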

2. Minimal data classification & tags (Day 2–3)

Define 3–4 classes:

- PUBLIC, INTERNAL, SENSITIVE, REGULATED

Then:

- Tag:

  - DB tables / collections.

  - Buckets / folder prefixes.

  - Key message types in queues.

- If nothing else, maintain a CSV mapping: resource → classification.

This gives you a language for policy-as-code later.
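Even the CSV fallback can be made machine-checkable. The sketch below assumes a file with `resource` and `classification` columns and uses longest-prefix matching so a bucket prefix covers the objects under it; treating unknown resources as REGULATED is a fail-closed assumption of this example, not something the CSV approach requires.

```python
import csv
import io

VALID_CLASSES = {"PUBLIC", "INTERNAL", "SENSITIVE", "REGULATED"}


def load_classifications(fileobj) -> dict[str, str]:
    """Parse a resource,classification CSV and reject unknown classes."""
    mapping = {}
    for row in csv.DictReader(fileobj):
        data_class = row["classification"].strip().upper()
        if data_class not in VALID_CLASSES:
            raise ValueError(f"unknown class {data_class!r} for {row['resource']!r}")
        mapping[row["resource"].strip()] = data_class
    return mapping


def classify(mapping: dict[str, str], resource: str) -> str:
    """Longest matching prefix wins; unknown resources fail closed."""
    matches = [prefix for prefix in mapping if resource.startswith(prefix)]
    if not matches:
        return "REGULATED"
    return mapping[max(matches, key=len)]


# Hypothetical mapping; in practice this would be a file in the repo.
CSV_TEXT = """resource,classification
s3://logs/,internal
s3://logs/tickets/,sensitive
db.public_docs,public
"""

mapping = load_classifications(io.StringIO(CSV_TEXT))
print(classify(mapping, "s3://logs/tickets/42.json"))  # SENSITIVE
print(classify(mapping, "db.unknown_table"))           # REGULATED
```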

3. Set explicit retention defaults (Day 3–4)

Decide, for each data class in AI flows:

- Model API:

  - Are you using a “no retention” option? If not, why?

  - If vendor stores logs, what’s the retention period?

- Vector DB:

  - Default TTL for embeddings per class.

  - Per-tenant separation vs shared indexes.

- Logs/telemetry:

  - Are prompts/responses stored?

  - Are you redacting PII before logging?

Document decisions in a short “AI data retention” note, even if imperfect. This becomes the starting point for SOC2/ISO alignment.
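For the "redacting PII before logging" question, one low-effort starting point is a filter on the logger itself. The sketch below uses Python's standard `logging.Filter` and covers email addresses only; the pattern is deliberately simple and will miss edge cases, so treat it as a floor and not as proof that logs are PII-free.

```python
import logging
import re

# Deliberately simple pattern; an assumption of this sketch, not a complete PII detector.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def redact(text: str) -> str:
    return EMAIL.sub("[REDACTED_EMAIL]", text)


class RedactEmails(logging.Filter):
    """Rewrite each record so the formatted message never contains an email."""

    def filter(self, record: logging.LogRecord) -> bool:
        # Format first so addresses passed as arguments are caught too.
        record.msg = redact(record.getMessage())
        record.args = ()
        return True  # keep the record, just scrubbed


handler = logging.StreamHandler()
handler.addFilter(RedactEmails())
logger = logging.getLogger("ai_telemetry")
logger.addHandler(handler)
logger.setLevel(logging.INFO)

logger.info("prompt from %s: reset my password", "jane@example.com")
# Emits: prompt from [REDACTED_EMAIL]: reset my password
```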

4. Wrap one policy in code (Day 4–5)

Pick one high-leverage policy and enforce it in code:

Example: “No SENSITIVE data to third-party LLMs.”

Implementation ideas:

- A middleware layer before any LLM call that:

  - Checks data classification tags in the request metadata.

  - Blocks or routes to an internal model if class is SENSITIVE.

  - Logs an event with user + reason.

- A static check or CI hook that:

  - Flags direct calls to third-party LLMs bypassing the gateway.

This doesn’t need to be a full policy engine; thin glue is fine. The point is: move one important sentence from docs to code.
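Both implementation ideas fit in well under a hundred lines. The following is a sketch under stated assumptions: the model clients are plain callables, `vendor_sdk` is a made-up module name standing in for whatever third-party SDK you use, and REGULATED is blocked alongside SENSITIVE because it is the stricter class.

```python
import ast
import logging
from typing import Callable, Optional

logger = logging.getLogger("llm_gateway")

BLOCKED_FOR_EXTERNAL = {"SENSITIVE", "REGULATED"}


class PolicyViolation(Exception):
    pass


def call_llm(prompt: str, data_classes: list[str], user: str,
             external_model: Callable[[str], str],
             internal_model: Optional[Callable[[str], str]] = None) -> str:
    """Gateway: check tags, then block or route to an internal model."""
    restricted = BLOCKED_FOR_EXTERNAL & set(data_classes)
    if not restricted:
        return external_model(prompt)
    reason = f"classes {sorted(restricted)} may not go to a third-party LLM"
    logger.warning("policy=no_sensitive_to_third_party user=%s reason=%s", user, reason)
    if internal_model is None:
        raise PolicyViolation(reason)
    return internal_model(prompt)


def find_direct_vendor_imports(source: str, vendor_modules: set[str]) -> list[int]:
    """CI check: line numbers of imports that bypass the gateway."""
    hits = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom):
            names = [node.module or ""]
        else:
            continue
        if any(name.split(".")[0] in vendor_modules for name in names):
            hits.append(node.lineno)
    return sorted(hits)


external = lambda p: "external:" + p   # stand-ins for real model clients
internal = lambda p: "internal:" + p
print(call_llm("summarize", ["SENSITIVE"], "u1", external, internal))  # internal:summarize
print(find_direct_vendor_imports("import os\nimport vendor_sdk\n", {"vendor_sdk"}))  # [2]
```

In CI, the import check would run over every file outside the gateway module and fail the build on any hit.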

5. Put prompts under change control (Day 5–6)

Minimum viable governance:

- Store prompts in:

  - Git, or

  - A config service with versioning.

- Require:

  - Owner per prompt.

  - At least 1 reviewer for changes that touch SENSITIVE or REGULATED sources.

  - Automatic logging of prompt version ID in telemetry for each request.

You’ve effectively turned “UI strings” into configuration with an audit trail.
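The requirements above translate into a small record plus a validation step. This is a sketch, not a prescribed schema: the `PromptVersion` fields are hypothetical, and deriving the version ID from a hash of the template is one option among several (a git commit SHA would serve equally well).

```python
import hashlib
from dataclasses import dataclass

REVIEW_REQUIRED = {"SENSITIVE", "REGULATED"}


@dataclass(frozen=True)
class PromptVersion:
    name: str
    owner: str
    template: str
    reviewers: tuple[str, ...] = ()
    source_classes: tuple[str, ...] = ()  # classes of the data sources it reads

    @property
    def version_id(self) -> str:
        """Content-derived ID: any edit to the template yields a new version."""
        return hashlib.sha256(self.template.encode()).hexdigest()[:12]


def validate(prompt: PromptVersion) -> list[str]:
    """Return the governance violations that should block a merge."""
    errors = []
    if not prompt.owner:
        errors.append("missing owner")
    if REVIEW_REQUIRED & set(prompt.source_classes) and not prompt.reviewers:
        errors.append("needs at least 1 reviewer for SENSITIVE/REGULATED sources")
    return errors


def telemetry_fields(prompt: PromptVersion, request_id: str) -> dict:
    """Fields to attach to every request log line."""
    return {"request_id": request_id, "prompt": prompt.name,
            "prompt_version": prompt.version_id}


draft = PromptVersion("ticket-summary", "support-team",
                      "Summarize this ticket: {ticket}",
                      source_classes=("SENSITIVE",))
print(validate(draft))  # ['needs at least 1 reviewer for SENSITIVE/REGULATED sources']
```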

6. Run one incident simulation (Day 6–7)

Pick a scenario:

“A customer claims our AI assistant exposed their internal ticket details to another tenant.”

Walk through:

- What logs would you query?

- Can you link outputs to:

  - Model used

  - Prompt version

  - Data sources accessed?

Note the gaps:

- Missing correlation IDs?

- No record of data sources per request?

- No prompt version logs?

Use the findings to prioritize your next sprint items.
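The walkthrough can be rehearsed against real or sample logs with a few lines of code. A minimal sketch, assuming log events are dicts that share a `correlation_id`; the field names and sample events are hypothetical.

```python
REQUIRED_FIELDS = ("correlation_id", "model", "prompt_version", "data_sources")


def reconstruct(events: list[dict], correlation_id: str) -> tuple[dict, list[str]]:
    """Merge all events for one request and list the fields you cannot recover."""
    merged = {}
    for event in events:
        if event.get("correlation_id") == correlation_id:
            merged.update({k: v for k, v in event.items() if v is not None})
    gaps = [field for field in REQUIRED_FIELDS if field not in merged]
    return merged, gaps


# Hypothetical events from two services; note nobody logged the prompt version.
EVENTS = [
    {"correlation_id": "req-42", "service": "gateway", "model": "model-a"},
    {"correlation_id": "req-42", "service": "retrieval", "data_sources": ["db.tickets"]},
    {"correlation_id": "req-43", "service": "gateway", "model": "model-b"},
]

interaction, gaps = reconstruct(EVENTS, "req-42")
print(gaps)  # ['prompt_version']
```

Each entry in `gaps` is a candidate item for the next sprint.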

Bottom line

AI privacy and governance is not about writing an “AI ethics” doc and hoping your LLM behaves. It’s about:

- Defining what data you have and how risky it is.

- Encoding simple rules in code and infrastructure.

- Ensuring every model interaction leaves a trail you can explain.

The main blockers are usually:

- Lack of shared vocabulary (data classes, risk levels).

- Overreliance on vendor marketing instead of explicit contracts and controls.

- Prompts and workflows treated as ephemeral instead of governed configuration.

If you can:

- Answer “what data flows where” for each AI feature,

- Show one concrete policy enforced in code,

- And reconstruct a specific interaction from logs,

you’re already ahead of most organizations shipping AI today.

Everything else—fine-grained policy engines, fancy governance dashboards, AI-specific certifications—is an iteration on top of those basics, not a substitute for them.