The Unsexy AWS Work That’s Quietly Cutting Cloud Bills by 30–50%

Why this matters this week

AWS bills are spiking again.

Across a handful of teams I’ve spoken with in the last month:

- One mid-size SaaS company cut their AWS bill by 42% in 10 weeks without changing customer-facing features.

- A fintech reduced p99 latency by ~35% while moving off an overbuilt EKS cluster to a mostly serverless stack.

- A consumer app halved its monthly operational incidents by putting disciplined SLOs and observability around its serverless components.

None of these results came from a magic AWS announcement. They came from boring but high-leverage engineering:

- Dropping “default everything into Lambda” as a religion.

- Building a minimal platform layer around AWS instead of copy-pasting CDK stacks across repos.

- Treating cost as an SLO-adjacent signal, not a finance afterthought.

This week’s post is about practical AWS cloud engineering: combining serverless where it’s strong, EC2/Fargate where it’s not, and a platform engineering layer to keep cost and reliability from drifting out of control.

The delta between “we use AWS” and “we use AWS competently” is often 30–50% of your infra bill and a large fraction of your incident load.

What’s actually changed (not the press release)

None of this is new tech. What has changed recently:

Serverless isn’t “cheap by default” anymore

- Lambda, DynamoDB, API Gateway, Step Functions are mature and heavily used.

- Patterns that were “good enough” at low scale are now expensive at moderate scale:

- Always-on concurrency in Lambda.

- High-cardinality metrics and verbose logs in CloudWatch.

- DynamoDB hot partitions due to naive keys.

- The pricing model punishes “chatty” architectures.
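To make the hot-partition point concrete, here is a minimal sketch of write sharding, one common way to spread a hot logical key across several DynamoDB partitions. The function names, the `#` separator, and the default shard count are illustrative assumptions, not something from the teams mentioned above.

```python
from __future__ import annotations

import hashlib


def sharded_partition_key(base_key: str, item_id: str, shard_count: int = 10) -> str:
    """Spread writes for one hot logical key across `shard_count` partition keys.

    The shard is derived from a stable hash of the item id, so the same item
    always lands on the same shard (hypothetical key layout: "<base>#<shard>").
    """
    if shard_count < 1:
        raise ValueError("shard_count must be >= 1")
    digest = hashlib.sha256(item_id.encode("utf-8")).digest()
    shard = int.from_bytes(digest[:4], "big") % shard_count
    return f"{base_key}#{shard}"


def all_shard_keys(base_key: str, shard_count: int = 10) -> list[str]:
    """Every partition key a reader has to query (and merge) for base_key."""
    return [f"{base_key}#{n}" for n in range(shard_count)]
```

The trade-off is on the read side: a query for everything under `base_key` now fans out over all shards, so this only pays off when write pressure on a single key is the actual problem.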

AWS cost tooling is finally usable by engineers

- Cost Explorer, CUR (Cost & Usage Report), and tagging controls are just about good enough to wire into your platform:

- Engineers can see service-level cost per team / per environment.

- You can correlate cost spikes with deploys and traffic patterns.

- The gap is now less “we can’t see” and more “we haven’t wired this into our workflows”.

Platform engineering has become the de facto answer to “too many AWS knobs”

- Most orgs with >5 teams are:

- Standardising on 2–3 golden patterns (HTTP microservice, async worker, scheduled job).

- Shipping these as Terraform/CDK modules or internal templates.

- The win is not “Kubernetes everywhere” but fewer decisions at the edge:

- Same logging, same tracing, same IAM posture, same alarms.

Reliability expectations have gone up while tolerance for ops headcount has gone down

- Customers expect “always on”.

- Leadership expects infra/ops teams to shrink, not grow.

- That pushes you toward:

- Managed services where it actually reduces toil.

- But with tight guardrails, observability, and disaster scenarios rehearsed.

In practice, this means AWS cloud engineering this year is about governance and patterns, not new primitives.

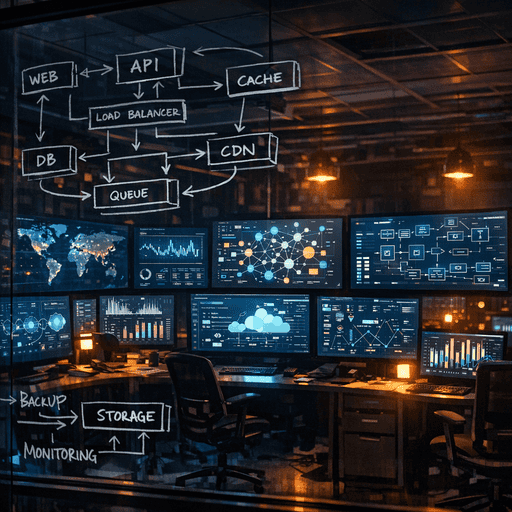

How it works (simple mental model)

A useful mental model: three lanes for AWS workloads.

Lane 1: Event-driven serverless (Lambda-first)

Use when:

- Traffic is spiky or low-to-medium volume.

- Work is I/O bound, short-lived, and scales with events.

- You can tolerate cold starts or design around them.

Best fits:

- Webhooks, ingestion endpoints, lightweight APIs.

- Stream processors (Kinesis, Kafka, DynamoDB Streams).

- Workflow glue (Step Functions orchestration).

Lane 2: Long-lived services (Fargate/EC2/EKS)

Use when:

- You have steady or high volumes.

- Latency is sensitive (p99 matters).

- There’s heavy CPU/memory usage or long-running connections.

Best fits:

- High-traffic APIs and backends.

- Real-time bidirectional communication (WebSockets, gRPC).

- Analytics workers, ML inference services with predictable load.

Lane 3: Data & batch (managed data services + batch runners)

Use when:

- Data sets are large (hundreds of GB or more) and operations are complex.

- Workloads are periodic, batch-like, or ETL-heavy.

Best fits:

- Redshift for analytics, Aurora for OLTP.

- EMR / Glue / managed Spark for large ETL.

- ECS-on-Fargate batch jobs or AWS Batch for heavy processing.

Then overlay three cross-cutting concerns:

- Reliability: SLOs, multi-AZ, retries, backoff, idempotency.

- Observability: Metrics, logs, traces, and structured correlation IDs.

- Cost: Per-team budgets, per-service dashboards, anomaly alerts.
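The reliability bullet (retries, backoff, idempotency) is easier to reason about with code in front of you. The following is a minimal sketch, assuming an in-memory dictionary as a stand-in for a real deduplication store; `retry_with_backoff` and `IdempotentHandler` are hypothetical names, not an AWS SDK API.

```python
import random
import time
from typing import Any, Callable, Dict, Tuple, Type


def retry_with_backoff(
    op: Callable[[], Any],
    *,
    max_attempts: int = 5,
    base_delay: float = 0.1,
    max_delay: float = 5.0,
    retry_on: Tuple[Type[BaseException], ...] = (Exception,),
    sleep: Callable[[float], None] = time.sleep,
) -> Any:
    """Call `op`, retrying with exponential backoff and full jitter.

    In real code, narrow `retry_on` to transient errors (timeouts, throttles).
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be >= 1")
    for attempt in range(max_attempts):
        try:
            return op()
        except retry_on:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error
            cap = min(max_delay, base_delay * (2 ** attempt))
            sleep(random.uniform(0.0, cap))  # full jitter avoids retry storms
    raise AssertionError("unreachable")


class IdempotentHandler:
    """Runs a handler at most once per idempotency key.

    The dict is an in-memory stand-in (assumption); a real system would use a
    shared store with conditional writes and a TTL.
    """

    def __init__(self, handler: Callable[[Any], Any]) -> None:
        self._handler = handler
        self._results: Dict[str, Any] = {}

    def handle(self, idempotency_key: str, payload: Any) -> Any:
        if idempotency_key in self._results:
            return self._results[idempotency_key]  # duplicate delivery: replay result
        result = self._handler(payload)
        self._results[idempotency_key] = result
        return result
```

Retries without idempotency tend to turn a transient failure into duplicate side effects, which is why the two are worth shipping together in a platform library.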

Your job as a platform or infra team is to:

- Standardise each lane:

- One or two default stacks per lane.

- Baked-in logging, metrics, tracing, IAM, and deployment.

- Make lane-switching deliberate:

- Moving from Lambda to Fargate or vice versa is:

- Documented org-wide, with thresholds (QPS, p95, cost).

- A reviewable design decision, not ad-hoc firefighting.

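One way to make lane-switching deliberate is to write the thresholds down as code that a design review can argue about. This is a rough sketch only: the field names, lane labels, and every threshold value below are placeholders I made up for illustration, and each org would need to set its own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkloadProfile:
    sustained_rps: float          # typical steady-state requests per second
    peak_to_avg_ratio: float      # how spiky traffic is
    p99_budget_ms: float          # latency budget the service must meet
    long_lived_connections: bool  # e.g. WebSockets or streaming gRPC
    batch_or_etl: bool            # periodic / batch / ETL-heavy work


def suggest_lane(
    w: WorkloadProfile,
    sustained_rps_threshold: float = 200.0,  # placeholder threshold
    spiky_ratio: float = 5.0,                # placeholder threshold
    tight_p99_ms: float = 100.0,             # placeholder threshold
) -> str:
    """Return a suggested lane; a starting point for review, not a verdict."""
    if w.batch_or_etl:
        return "lane-3-data-batch"
    if w.long_lived_connections:
        return "lane-2-long-lived"
    if w.p99_budget_ms <= tight_p99_ms:
        return "lane-2-long-lived"
    if w.sustained_rps >= sustained_rps_threshold and w.peak_to_avg_ratio < spiky_ratio:
        return "lane-2-long-lived"
    return "lane-1-serverless"
```

The value is less in the function itself and more in having one reviewed place where the thresholds live, so a move between lanes shows up as a diff rather than a surprise.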

Where teams get burned (failure modes + anti-patterns)

1. “Lambda all the things” beyond its cost envelope

Pattern:

- Start with Lambda + API Gateway for everything.

- Traffic grows to 500–2,000 RPS across many endpoints.

- Costs climb and you start hitting concurrency limits and noisy neighbours.

Symptoms:

- Bill spikes: API Gateway + Lambda becomes more expensive than running a small Fargate/EKS cluster.

- Latency jitter at p95/p99, especially with cold starts and VPC networking.

- Throttle errors when concurrency caps are hit and scaling lag kicks in.
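A quick back-of-the-envelope check helps predict the throttling symptom before it shows up in production. The sketch below applies Little's law (concurrency is roughly arrival rate times average duration); the function names are hypothetical, and `concurrency_limit` is whatever quota applies to your account or function.

```python
import math


def estimated_concurrency(rps: float, avg_duration_ms: float) -> int:
    """Approximate concurrent executions needed: rate x average duration."""
    return math.ceil(rps * avg_duration_ms / 1000.0)


def concurrency_headroom(rps: float, avg_duration_ms: float, concurrency_limit: int) -> float:
    """Fraction of the limit still free; a negative value suggests throttling."""
    if concurrency_limit <= 0:
        raise ValueError("concurrency_limit must be positive")
    return 1.0 - estimated_concurrency(rps, avg_duration_ms) / concurrency_limit
```

Averages hide bursts, so treat the result as a lower bound: a slow downstream dependency that doubles duration also doubles the concurrency you need.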

What’s better:

- Keep Lambda for:

- Spiky workloads, integrations, and low-traffic services.

- Move sustained, high-traffic APIs to:

- ALB + Fargate or EKS, with autoscaling and right-sized tasks or instances.

Real example:

- B2B SaaS analytics API:

- At ~700 RPS peak, Lambda/API Gateway cost ~3x a small Fargate setup.

- Moving to Fargate cut infra cost by ~60% and improved p99 by ~30%.
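If you want to run this kind of comparison for your own service, a rough model is enough to see where the crossover sits. The sketch below is deliberately simplified: it ignores free tiers, data transfer, logging, and load balancer capacity charges, and all prices are inputs you supply from the current AWS pricing pages for your region. The class and function names are hypothetical.

```python
from dataclasses import dataclass

HOURS_PER_MONTH = 730
SECONDS_PER_MONTH = HOURS_PER_MONTH * 3600


@dataclass(frozen=True)
class ServerlessPrices:
    per_million_requests: float  # function request charge
    per_gb_second: float         # function compute charge
    gateway_per_million: float   # API gateway request charge


@dataclass(frozen=True)
class ContainerPrices:
    per_vcpu_hour: float
    per_gb_hour: float
    load_balancer_per_month: float  # flat approximation (assumption)


def monthly_serverless_cost(
    avg_rps: float, avg_duration_ms: float, memory_gb: float, p: ServerlessPrices
) -> float:
    """Request charges plus GB-seconds of compute for a month of steady traffic."""
    requests = avg_rps * SECONDS_PER_MONTH
    gb_seconds = requests * (avg_duration_ms / 1000.0) * memory_gb
    request_cost = requests / 1e6 * (p.per_million_requests + p.gateway_per_million)
    return request_cost + gb_seconds * p.per_gb_second


def monthly_container_cost(
    task_count: int, vcpu_per_task: float, gb_per_task: float, p: ContainerPrices
) -> float:
    """Always-on tasks for a month plus a flat load balancer charge."""
    per_task_hour = vcpu_per_task * p.per_vcpu_hour + gb_per_task * p.per_gb_hour
    return task_count * HOURS_PER_MONTH * per_task_hour + p.load_balancer_per_month
```

Feed it your average (not peak) RPS for the serverless side and the task count you would need at peak for the container side; that asymmetry is exactly why sustained traffic tends to favour containers and spiky traffic tends to favour Lambda.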

2. “EKS as a platform” with no platform

Pattern:

- Team deploys EKS “to standardise everything”.

- Each app team configures its own:

- Ingress, autoscaling, secrets, logging, sidecars.

Symptoms:

- Inconsistent security posture:

- Some pods run as root, some not. Some have wide IAM permissions via IRSA.

- Observability chaos:

- Different log formats, inconsistent metrics.

- Huge ops overhead:

- Cluster upgrades are painful because everything is custom.

What’s better:

- Treat EKS as an implementation detail of your platform, not the platform itself.

- Provide:

- A small set of Helm charts or templates.

- Pre-baked sidecars/DaemonSets for logging/metrics.

- Central decisions on ingress, TLS, and secrets.

Real example:

- Mid-size marketplace:

- Migrated from “DIY EKS” to an internal “Service Template”.

- App teams only answer: CPU/mem, autoscaling hints, environment variables.

- Incident load for infra dropped ~40%; services became much more uniform.
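To give a feel for what "app teams only answer a few questions" can look like, here is a hypothetical sketch of a service template input and the platform-owned defaults merged around it. None of the field names or default values come from the marketplace in the example; they are assumptions for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Dict


@dataclass(frozen=True)
class ServiceSpec:
    """The only knobs an app team sets (hypothetical schema)."""
    name: str
    cpu_millicores: int
    memory_mib: int
    min_replicas: int = 2
    max_replicas: int = 10
    target_cpu_percent: int = 60
    env: Dict[str, str] = field(default_factory=dict)

    def validate(self) -> list[str]:
        errors = []
        if self.cpu_millicores <= 0 or self.memory_mib <= 0:
            errors.append("cpu_millicores and memory_mib must be positive")
        if not 1 <= self.min_replicas <= self.max_replicas:
            errors.append("need 1 <= min_replicas <= max_replicas")
        if not 1 <= self.target_cpu_percent <= 100:
            errors.append("target_cpu_percent must be between 1 and 100")
        return errors


def render_service(spec: ServiceSpec) -> dict:
    """Merge app-team inputs with platform-owned defaults (illustrative values)."""
    errors = spec.validate()
    if errors:
        raise ValueError("; ".join(errors))
    return {
        "service": spec.name,
        "resources": {"cpu_millicores": spec.cpu_millicores, "memory_mib": spec.memory_mib},
        "autoscaling": {
            "min": spec.min_replicas,
            "max": spec.max_replicas,
            "target_cpu_percent": spec.target_cpu_percent,
        },
        "env": dict(spec.env),
        # Everything below is decided centrally, not per app team.
        "security": {"run_as_non_root": True},
        "logging": {"format": "json"},
        "tracing": {"enabled": True},
    }
```

In practice the output would feed a Helm chart or similar template; the point is that security, logging, and tracing settings never appear in the app team's input at all.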

3. Zero cost visibility until finance yells

Pattern:

- No enforced tags.

- No dashboards connecting services to spend.

- Cost is reactively reviewed monthly/quarterly.

Symptoms:

- Unattributed spend (20–40% of bill in “unallocated”).

- Difficult to justify optimisations because you can’t connect:

- “This product feature” → “This AWS line item”.

What’s better:

- Enforce minimal tagging via SCPs / CI checks: `team`, `service`, `env`, `cost_center`.

- Pipe CUR into a data store and:

- Build basic dashboards: cost by team, cost by service, cost per environment.

- Set anomaly alerts with engineering in the loop, not just finance.
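As a starting point for the dashboards and alerts, here is a small sketch that rolls CUR rows up by tag and flags an unusual day. It assumes rows exported as dictionaries with Athena-style CUR column names (`line_item_unblended_cost`, and `resource_tags_user_team` for a `team` tag); your column names depend on how the report and tags are configured. The anomaly rule is a simple mean-plus-sigma check I chose for illustration, not a recommendation over AWS's own anomaly detection.

```python
from collections import defaultdict
from statistics import mean, pstdev
from typing import Dict, Iterable, Mapping, Sequence


def cost_by_tag(
    rows: Iterable[Mapping[str, str]],
    tag_column: str = "resource_tags_user_team",   # assumed column name
    cost_column: str = "line_item_unblended_cost",  # assumed column name
) -> Dict[str, float]:
    """Sum cost per tag value; rows with no tag land in 'unallocated'."""
    totals: Dict[str, float] = defaultdict(float)
    for row in rows:
        owner = row.get(tag_column) or "unallocated"
        totals[owner] += float(row[cost_column])
    return dict(totals)


def is_cost_anomaly(
    history: Sequence[float], today: float, sigma: float = 3.0, min_days: int = 7
) -> bool:
    """Flag `today` if it exceeds the recent mean by `sigma` standard deviations.

    A floor of 5% of the mean keeps flat histories from alerting on noise.
    """
    if len(history) < min_days:
        return False  # not enough data to call anything unusual
    baseline = mean(history)
    spread = max(pstdev(history), 0.05 * baseline)
    return today > baseline + sigma * spread
```

The size of the `unallocated` bucket is itself a useful metric: it tells you how much of the bill nobody can currently be asked about.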

Real example:

- Fintech with ~30 services on AWS:

- After tagging and simple cost dashboards, discovered:

- Abandoned test environments costing mid-five figures annually.

- Clean-up + right-sizing shaved 28% off the monthly bill without performance hits.

4. Observability bolted on to serverless

Pattern:

- Teams adopt Lambda, DynamoDB, SQS, Step Functions.

- Observability is left to CloudWatch defaults and console clicking.

Symptoms:

- Hard-to-debug incidents:

- No tracing across Lambda → DynamoDB → external APIs.

- Slow MTTR:

- Need to stitch logs by hand using timestamps and guesswork.

- No clear SLOs:

- Hard to know what “good” looks like per user-facing workflow.

What’s better:

- Define platform-wide observability contracts:

- Standard logging schema (correlation IDs, user IDs where applicable).

- Default traces for all HTTP handlers and async handlers.

- Minimal SLOs per customer-facing API or workflow.

- Bake it into:

- Lambda layers or base containers.

- CDK/Terraform modules.
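A logging contract is easiest to enforce when it ships as a few lines every service imports. Below is a minimal sketch using only the Python standard library; the field names in the JSON payload and the `start_request` helper are assumptions, not an established schema.

```python
import contextvars
import json
import logging
import uuid
from typing import Optional

# Holds the correlation id for the current request or event being handled.
correlation_id: contextvars.ContextVar[str] = contextvars.ContextVar(
    "correlation_id", default="-"
)


class JsonFormatter(logging.Formatter):
    """Emit one JSON object per log line with a fixed set of fields."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "ts": self.formatTime(record),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
            "correlation_id": correlation_id.get(),
        }
        return json.dumps(payload)


def start_request(incoming_id: Optional[str] = None) -> str:
    """Reuse the caller's correlation id if present, otherwise mint one."""
    cid = incoming_id or uuid.uuid4().hex
    correlation_id.set(cid)
    return cid


def configure_logging(level: int = logging.INFO) -> None:
    """Route the root logger through the JSON formatter."""
    handler = logging.StreamHandler()
    handler.setFormatter(JsonFormatter())
    logging.basicConfig(level=level, handlers=[handler], force=True)
```

A handler would call `start_request(...)` with the id from an incoming header or message attribute, then pass the same id on to anything it calls, so logs across Lambda, queues, and downstream APIs can be joined on one field instead of timestamps.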

Practical playbook (what to do in the next 7 days)

This assumes you already run some mix of AWS serverless and containerised workloads.

Day 1–2: Baseline and tags

- Enforce minimal tagging for all new resources: `team`, `service`, `env`, `cost_center`.

- Add CI/CD checks that fail infra changes without tags.

- Build three simple dashboards (CloudWatch or your tooling of choice):

- AWS cost by `team`.

- AWS cost by `service` (top 20).

- Error rate and latency (p95) for your top 5 customer-facing APIs.

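For the CI check, one low-effort option is to inspect the JSON form of a Terraform plan (the output of `terraform show -json` on a saved plan) and fail the build when a created or updated resource is missing a required tag. The sketch below assumes Terraform and relies on the plan's `resource_changes` structure; it only looks at the resource's own `tags` attribute, so provider-level default tags are not accounted for. The function name is hypothetical.

```python
from typing import Dict, List, Sequence

REQUIRED_TAGS = ("team", "service", "env", "cost_center")


def untagged_resources(
    plan: dict, required: Sequence[str] = REQUIRED_TAGS
) -> Dict[str, List[str]]:
    """Map resource address -> missing tag names for created/updated resources."""
    offenders: Dict[str, List[str]] = {}
    for rc in plan.get("resource_changes", []):
        change = rc.get("change") or {}
        actions = change.get("actions") or []
        if "create" not in actions and "update" not in actions:
            continue  # deletes and no-ops cannot introduce untagged spend
        after = change.get("after") or {}
        if "tags" not in after:
            continue  # resource type exposes no tags attribute
        tags = after.get("tags") or {}
        missing = [t for t in required if not tags.get(t)]
        if missing:
            offenders[rc["address"]] = missing
    return offenders
```

In a pipeline you would load the plan with `json.load`, call `untagged_resources`, print the offenders, and exit non-zero if the result is non-empty.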

Day 3–4: Classify workloads into lanes

- Inventory your top 10 most expensive services (or most critical if you don’t know cost yet):

- For each, classify into Lane 1 (Lambda), Lane 2 (Fargate/EKS/EC2), Lane 3 (data/batch).

- Check if the lane matches:

- Traffic profile (spiky vs sustained).

- Latency requirements.

- Cost behaviour.

- Identify 2–3 “wrong-lane” cases:

- Lambda APIs running at