Your Serverless Bill Is Lying to You: The Hidden Cost Centers on AWS

Why this matters this week

If you’re running on AWS and using Lambda, Fargate, or EventBridge in production, you’re probably looking at the wrong numbers.

What’s changed lately is not a single feature launch, but the emergent behavior of modern AWS stacks:

- More services per workload (Lambdas, Step Functions, SQS, EventBridge, DynamoDB, Kinesis, API Gateway).

- More “platform” layers (internal developer platforms, golden paths, shared VPCs, cross-account networking).

- More aggressive FinOps pressure as budgets tighten.

The result: teams think they’re “serverless and cheap,” but are:

- Paying 2–5x more than needed on IO, NAT, and inter-service chatter.

- Building fragile latency chains that degrade unpredictably under load.

- Lacking end‑to‑end traceability, making incidents slow and expensive.

This isn’t about shaving cents off Lambda duration. It’s about:

- Architectural patterns that silently multiply costs.

- Reliability SLOs being violated by design, not by bugs.

- Observability gaps that make root cause analysis a multi-team sport.

This week is a good time to reassess whether your AWS serverless architecture still matches your current scale, traffic shape, and org structure—not the slide deck from your first migration.

What’s actually changed (not the press release)

AWS has shipped a lot in the last 12–18 months, but the most business-relevant change for cloud engineering isn’t any one feature. It’s the composite behavior of these shifts:

Cheaper, more flexible compute options

- Graviton and ARM Lambda/Fargate are now mainstream.

- Lambda pricing tweaks (e.g., tiered pricing, new runtimes) make “lift & shift to Lambda” more palatable.

- Reality: most teams haven’t revisited their runtime or memory settings in years.

More pervasive private networking

- “Everything in a VPC” is now the default reflex.

- VPC Lattice, PrivateLink, and service connect patterns encourage internal-only endpoints.

- Reality: NAT gateway and cross‑AZ data transfer charges quietly dominate bills.

Better (optional) observability primitives

- AWS X-Ray, CloudWatch Logs Insights, and distributed tracing support are good enough for serious use.

- Structured logging and correlation IDs are supported, not exotic.

- Reality: many teams still treat Lambda logs as a best-effort dump, not an operational asset.

Platform engineering becoming a real thing

- Internal platforms now define how teams use Lambda, ECS, DynamoDB, etc.

- Self-service templates and “golden paths” lock in patterns—good or bad.

- Reality: bad patterns get institutionalized and cost/reliability issues scale with headcount.

In short: the tools matured, but a lot of architectures didn’t. The gap shows up in your CloudWatch dashboards and AWS bill, not in the press releases.

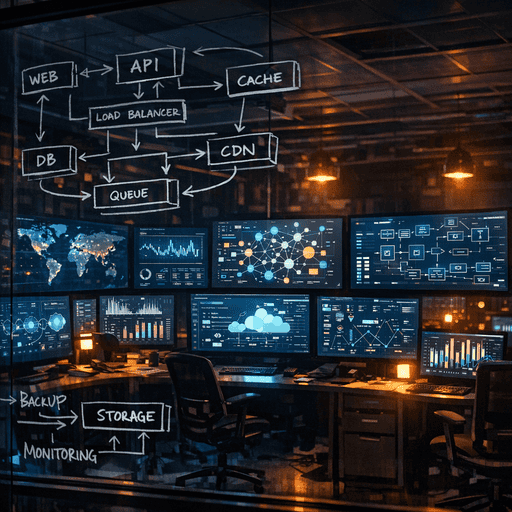

How it works (simple mental model)

Use this mental model to reason about AWS serverless cost & reliability:

1. Every hop has a tax

Each time a request crosses:

- A process boundary (Lambda → Lambda),

- A network boundary (Lambda → VPC → NAT → external API),

- Or a service boundary (API Gateway → Lambda → DynamoDB),

…you pay three taxes:

- Latency tax – p99/p999 grows faster than you expect.

- Failure tax – more partial failures, timeouts, and retries.

- Money tax – per‑request charges, data transfer, NAT, inter‑AZ.

If you can collapse two hops into one without losing clarity or isolation, you usually should—especially for synchronous paths.
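To make the latency and failure taxes concrete, here is a minimal sketch of how they compound across a synchronous chain. It assumes hops fail and stall independently, which real systems only approximate; the function names and sample figures are illustrative, not measurements.

```python
def chain_success_rate(per_hop_success: float, hops: int) -> float:
    """Probability that every hop in a serial chain succeeds (independence assumed)."""
    return per_hop_success ** hops


def chance_of_slow_hop(hops: int, percentile: float = 0.99) -> float:
    """Probability that at least one hop lands above its own latency percentile."""
    return 1.0 - percentile ** hops


if __name__ == "__main__":
    # Compare a single hop with longer chains at 99.9% per-hop success.
    for hops in (1, 3, 5, 10):
        print(hops, round(chain_success_rate(0.999, hops), 4), round(chance_of_slow_hop(hops), 4))
```

Under these assumptions, five hops that each succeed 99.9% of the time yield roughly 99.5% end-to-end success, and about 4.9% of requests hit at least one hop's p99 tail.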

2. Cost is dominated by “edges,” not “compute”

In many modern systems:

- Lambda/Fargate compute is <30% of cost.

- The rest is:

- NAT gateways

- Data transfer (inter‑AZ, cross‑region, to internet)

- API Gateway, Step Functions, EventBridge invocations

- DynamoDB and S3 request charges at high throughput

Serverless ≠ cheap if your architecture sprays data across AZs and services for every request.
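One way to check this against your own bill is to split line items into compute and everything else. The sketch below is a rough illustration only: the service keys and dollar amounts in `sample_bill` are made up, and you would substitute figures exported from your own cost reports.

```python
from typing import Mapping

# Which line items count as "compute" is a judgment call; adjust as needed.
COMPUTE_SERVICES = {"lambda", "fargate"}


def compute_share(line_items: Mapping[str, float]) -> float:
    """Fraction of total spend that goes to compute rather than edges."""
    total = sum(line_items.values())
    if total == 0:
        return 0.0
    compute = sum(cost for name, cost in line_items.items() if name in COMPUTE_SERVICES)
    return compute / total


# Hypothetical monthly bill in dollars, invented for illustration.
sample_bill = {
    "lambda": 1200.0,
    "fargate": 800.0,
    "nat_gateway": 2500.0,
    "data_transfer": 1500.0,
    "api_gateway": 900.0,
    "step_functions": 400.0,
    "eventbridge": 200.0,
    "dynamodb_requests": 1800.0,
    "s3_requests": 700.0,
}

if __name__ == "__main__":
    print(f"compute share: {compute_share(sample_bill):.0%}")
```

If the share for your real numbers comes out low, duration tuning is unlikely to move the bill much; the edges are where to look.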

3. Reliability comes from queues and idempotency, not just retries

SQS, SNS, and EventBridge are your main tools for:

- Decoupling components

- Smoothing load (burst → steady)

- Preventing cascading failures

But they only work if:

- Your handlers are idempotent.

- You design for at-least-once delivery.

- You have dead-letter queues (DLQs) that someone actually monitors.
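As a rough sketch of what idempotency under at-least-once delivery looks like, the class below skips messages it has already applied. The `idempotency_key` field and the in-memory set are assumptions for illustration; Lambda execution environments do not share memory, so a real handler would need a durable store (for example, a conditional write to a table) in place of the set.

```python
from typing import Any, Callable, Dict, Set


class IdempotentProcessor:
    """Applies each message's side effect at most once per idempotency key."""

    def __init__(self, apply_effect: Callable[[Dict[str, Any]], None]) -> None:
        self._apply_effect = apply_effect
        self._seen: Set[str] = set()  # stand-in for a durable store (assumption)

    def handle(self, message: Dict[str, Any]) -> bool:
        key = message["idempotency_key"]  # hypothetical field name
        if key in self._seen:
            return False  # duplicate delivery: acknowledge without re-applying
        self._apply_effect(message)
        # Record the key only after the effect succeeds, so a failed attempt
        # is retried when the queue redelivers the message.
        self._seen.add(key)
        return True
```

Recording the key after the effect means a crash between the two steps can still apply the effect twice, so the effect itself should tolerate that or be made atomic with the key write.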

4. Observability is a graph problem, not a log problem

If you think:

“We’ll just log everything from Lambda and grep it”

…you’ve already lost.

You need:

- A way to follow a single request across:

- API Gateway

- Lambdas / ECS tasks

- SQS / EventBridge

- Datastores

- Aggregate views of:

- p95+ latency per user-facing action

- Error rates per edge, not just per service

Think in terms of graphs of spans and events, not log lines.
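To illustrate the "per edge, not per service" idea, the sketch below aggregates error rates by caller/callee pair. The span shape (`caller`, `callee`, `error`) is a simplified assumption, not the format of X-Ray or any other tracing backend.

```python
from collections import defaultdict
from typing import Any, Dict, Iterable, Tuple

Edge = Tuple[str, str]


def edge_error_rates(spans: Iterable[Dict[str, Any]]) -> Dict[Edge, float]:
    """Error rate for each (caller, callee) edge in a set of spans."""
    calls: Dict[Edge, int] = defaultdict(int)
    errors: Dict[Edge, int] = defaultdict(int)
    for span in spans:
        edge = (span["caller"], span["callee"])
        calls[edge] += 1
        if span["error"]:
            errors[edge] += 1
    return {edge: errors[edge] / calls[edge] for edge in calls}
```

Keyed this way, a datastore that is healthy for one caller but throttling another shows up as a single bad edge instead of being averaged away in a per-service number.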

Where teams get burned (failure modes + anti-patterns)

1. NAT gateway as a silent profit center

Pattern:

- “For security, everything goes in private subnets behind NAT.”

- Lambdas and Fargate tasks call public APIs (payments, third-party SaaS).

- High volume, chatty integrations.

Symptoms:

- NAT charges quietly grow to become a top 3 line item.

- Spikes in third-party API traffic = monthly bill surprises.

Mitigations:

- Use VPC endpoints for AWS services where possible.

- For SaaS, explore PrivateLink or VPC peering if supported.

- For pure outbound HTTP, consider a small, horizontally scaled egress proxy with fixed cost instead of massive NAT use.
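NAT gateway pricing has an hourly component and a per-GB data processing component, which is why chatty integrations scale the bill. The sketch below estimates a monthly figure; the rates passed in are placeholders, not current AWS prices, so check the pricing page for your Region before relying on the output.

```python
def nat_monthly_cost(
    gateways: int,
    hours_per_month: float,
    hourly_rate: float,
    gb_processed: float,
    per_gb_rate: float,
) -> float:
    """Estimated monthly NAT cost: fixed hourly charge plus data processing."""
    fixed = gateways * hours_per_month * hourly_rate
    variable = gb_processed * per_gb_rate
    return fixed + variable


if __name__ == "__main__":
    # Placeholder inputs: 3 gateways, 730 hours, 20 TB processed per month.
    estimate = nat_monthly_cost(3, 730, hourly_rate=0.05, gb_processed=20_000, per_gb_rate=0.05)
    print(f"estimated NAT cost: ${estimate:,.2f}/month")
```

With inputs like these the data processing term dwarfs the hourly term, so traffic routed through VPC endpoints or a cache is what reduces the total.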

2. Death by micro-Lambda

Pattern:

- Every minor step in a workflow becomes its own Lambda.

- Orchestration done via Step Functions, EventBridge, and SQS.

- Synchronous workflows still chain 5–10 Lambdas in series.

Symptoms:

- Hard-to-debug latency issues (which hop is slow?).

- Complex IAM and deployment mapping.

- CloudWatch full of partial traces that don’t tell the whole story.

Mitigations:

- For synchronous request/response:

- Group logically related work into a single Lambda until you hit:

- Memory/runtime limits, or

- Clear domain/service boundaries.

- Reserve “micro” functions for:

- Truly independent async tasks.

- Cross-domain utilities with stable contracts.

Real-world example:

A payments team split “validate → authorize → persist → notify” into four Lambdas for a synchronous API. p95 latency was ~1.2s and hard to tune. Collapsing to one Lambda (with internal modules) cut p95 to ~350ms, reduced failure modes, and simplified alarms.
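A consolidated handler along those lines might look like the sketch below. It is not the team's code: the step functions, the approval rule, and the in-memory `STORE` and `OUTBOX` are hypothetical stand-ins for a real authorizer, datastore, and notification channel.

```python
from typing import Any, Dict, List

# In-memory stand-ins for a datastore and a notification channel (assumptions).
STORE: Dict[str, Dict[str, Any]] = {}
OUTBOX: List[str] = []


def validate(order: Dict[str, Any]) -> Dict[str, Any]:
    if order.get("amount_cents", 0) <= 0:
        raise ValueError("amount_cents must be positive")
    return order


def authorize(order: Dict[str, Any]) -> Dict[str, Any]:
    # Hypothetical rule standing in for a real payment authorization call.
    approved = order["amount_cents"] <= 1_000_000
    return {"order_id": order["order_id"], "approved": approved}


def persist(result: Dict[str, Any]) -> None:
    STORE[result["order_id"]] = result


def notify(result: Dict[str, Any]) -> None:
    OUTBOX.append(f"order {result['order_id']} approved={result['approved']}")


def handler(event: Dict[str, Any], context: Any = None) -> Dict[str, Any]:
    """One entry point; the four steps are plain function calls, not network hops."""
    result = authorize(validate(event))
    persist(result)
    notify(result)
    return {"statusCode": 200 if result["approved"] else 402, "body": result}
```

The steps stay separately testable as modules, but a request now crosses one invocation boundary instead of four.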

3. Async without discipline: EventBridge spaghetti

Pattern:

- EventBridge used as the default glue between everything.

- “Just emit an event” turns into 20+ consumers over time.

- No canonical schema registry or versioning policy.

Symptoms:

- Hard to predict blast radius of a schema change.

- Hard to reason about who depends on which events.

- Debugging user‑visible issues requires chasing events across multiple buses.

Mitigations:

- Treat events as public contracts, not internal DTOs.

- Maintain:

- A simple catalog of events, producers, and consumers.

- Versioning rules and deprecation timelines.

- Use EventBridge for:

- Cross‑domain communication and decoupling.

- Use SQS/Kinesis for:

- High-throughput pipelines inside a domain.

Real-world example:

A marketplace platform emitted OrderCreated with payloads shaped like their internal ORM. When they changed the DB schema, three downstream services broke in subtle ways. Moving to explicit event contracts and a registry avoided further incidents and allowed graceful evolution.
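An explicit, versioned contract can be as small as the sketch below. The envelope keys (`type`, `version`, `detail`) and the `OrderCreatedV1` fields are assumptions chosen for illustration; they are not the EventBridge event format or the marketplace's actual schema.

```python
from dataclasses import asdict, dataclass
from typing import Any, Dict

SUPPORTED_VERSIONS = {1}


@dataclass(frozen=True)
class OrderCreatedV1:
    """Public contract for the event, deliberately decoupled from any ORM model."""
    order_id: str
    total_cents: int
    currency: str


def to_event(detail: OrderCreatedV1) -> Dict[str, Any]:
    """Producer side: wrap the contract in a versioned envelope."""
    return {"type": "OrderCreated", "version": 1, "detail": asdict(detail)}


def from_event(event: Dict[str, Any]) -> OrderCreatedV1:
    """Consumer side: reject versions this consumer has not been written for."""
    if event["version"] not in SUPPORTED_VERSIONS:
        raise ValueError(f"unsupported OrderCreated version: {event['version']}")
    return OrderCreatedV1(**event["detail"])
```

Because the producer maps its internal model into `OrderCreatedV1` explicitly, a database schema change breaks that mapping at build or test time instead of breaking consumers in production.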

4. “Production-ish” observability

Pattern:

- Lambda logs go to CloudWatch; no structured logging.

- X-Ray only turned on for a subset of functions.

- No correlation ID propagated across hops.

Symptoms:

- Incidents require ad-hoc querying across multiple log groups.

- Partial outages (one slow dependency) appear as “random timeouts.”

- SLOs can’t be measured end-to-end.

Mitigations:

- Emit structured logs (JSON) with:

- request_id, user_id (where appropriate), trace_id, span_id.

- Use tracing (X-Ray or third-party) with:

- Consistent propagation headers through every hop.

- Define 2–3 user‑centric SLOs first, then instrument to meet them.
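A minimal sketch of such a structured log line, using only the standard library, is shown below. The helper name `log_json` and the extra `ts` and `message` keys are assumptions; the ID fields match the list above, and the IDs themselves are expected to arrive from the upstream hop.

```python
import json
import time
from typing import Any, Optional


def log_json(
    message: str,
    *,
    request_id: str,
    trace_id: str,
    span_id: str,
    user_id: Optional[str] = None,
    **fields: Any,
) -> str:
    """Print one JSON log line carrying the correlation fields, and return it."""
    record = {
        "ts": time.time(),
        "message": message,
        "request_id": request_id,
        "trace_id": trace_id,
        "span_id": span_id,
    }
    if user_id is not None:  # include only where appropriate
        record["user_id"] = user_id
    record.update(fields)
    line = json.dumps(record, sort_keys=True)
    print(line)
    return line
```

Every hop that logs with the same `request_id` and `trace_id` can then be joined in one query instead of grepped log group by log group.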

Real-world example:

A travel booking company migrated from monolith to serverless but kept “print-style” logs. A subtle DynamoDB throttling issue took 8 hours to identify. After standardizing correlation IDs and traces, similar issues were diagnosed in under 20 minutes.

Practical playbook (what to do in the next 7 days)

You don’t need a replatform. You need a focused review. Suggested plan:

Day 1–2: Map and measure

Traffic & topology snapshot

- Choose 1–2 critical user flows (e.g., “checkout,” “sign up”).

- Draw the end-to-end path:

- API Gateway → Lambdas/ECS → Queues/Events → Datastores → External APIs.

- Count hops and note which are:

- Cross‑AZ

- Cross‑account

- Internet/NAT bound

Cost breakdown for those flows

- For the last 30 days, pull:

- Lambda cost (by function)

- API Gateway/ALB cost

- SQS/EventBridge/Step Functions invocations

- NAT gateway and data transfer

- Roughly allocate to those flows (even if via sampling).

Deliverable: a single-page diagram with annotations like “~$X/month for this path” and “~Yms p95 here.”

Day 3–4: Attack the worst offenders

Focus on 2–3 changes with obvious ROI:

NAT & data transfer

- Identify Lambdas/ECS tasks doing high-volume outbound calls.

- Prioritize:

- Adding VPC endpoints for AWS services they hit.

- Moving read-heavy external calls to:

- Caching

- Precomputation

- Or a smaller number of controlled egress points.

Function consolidation